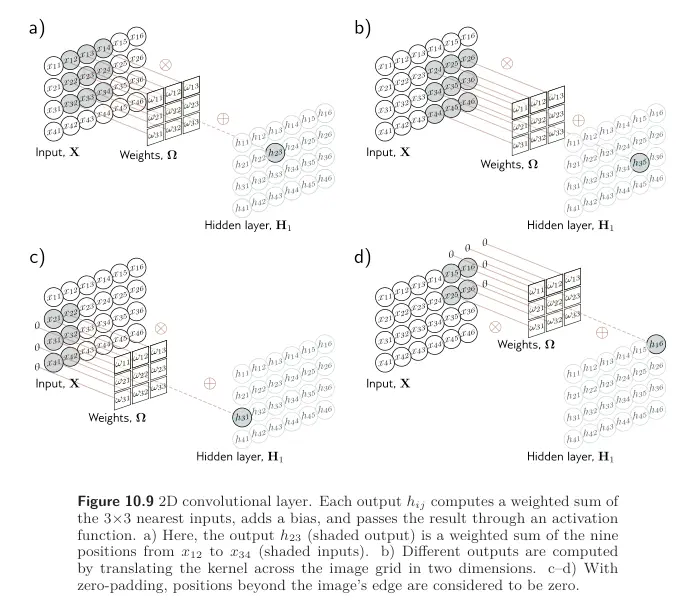

We’ve seen how 1D convolution works. Convolutions are most commonly applied to 2D image data, in which case the kernel also becomes a 2D object.

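A $K \times K$ kernel (with $K$ odd) applied to a 2D input comprising elements $x_{ij}$ computes a single layer of hidden units $h_{ij}$ as:

$$
h_{ij} = a\!\left[\beta + \sum_{m=1}^{K}\sum_{n=1}^{K} \omega_{mn}\, x_{i+m-c,\; j+n-c}\right], \qquad c = \frac{K+1}{2}
$$

where $\omega_{mn}$ are the entries of the kernel, $\beta$ is a bias, and $a[\cdot]$ is the activation function. This is just a weighted sum over a square input region. The kernel is slid over the input to create an output at each position.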
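The sliding weighted sum can be sketched in plain Python. This is a minimal illustration, not a reference implementation: the name `conv2d_single` is hypothetical, the kernel is assumed to be square with odd size, borders are zero-padded, and no activation is applied. As is usual in deep learning, the kernel is not flipped.

```python
def conv2d_single(x, kernel, bias=0.0):
    """Slide a square, odd-sized kernel over a 2D input with zero-padding.

    `x` and `kernel` are lists of lists; the output has the same
    height and width as `x`. (Hypothetical helper for illustration.)
    """
    height, width = len(x), len(x[0])
    k = len(kernel)
    half = k // 2  # distance from the kernel centre to its edge

    out = [[0.0] * width for _ in range(height)]
    for i in range(height):
        for j in range(width):
            total = bias
            for m in range(k):
                for n in range(k):
                    r, c = i + m - half, j + n - half
                    # Positions outside the input contribute zero (zero-padding).
                    if 0 <= r < height and 0 <= c < width:
                        total += kernel[m][n] * x[r][c]
            out[i][j] = total
    return out


x = [
    [1, 2, 3],
    [4, 5, 6],
    [7, 8, 9],
]
box = [[1, 1, 1] for _ in range(3)]  # 3x3 kernel of ones: sums each neighbourhood
h = conv2d_single(x, box)
```

With a kernel of ones, each output is the sum of the input values under the kernel, so the centre output covers the whole 3×3 input while the corner outputs only see the four in-bounds values.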

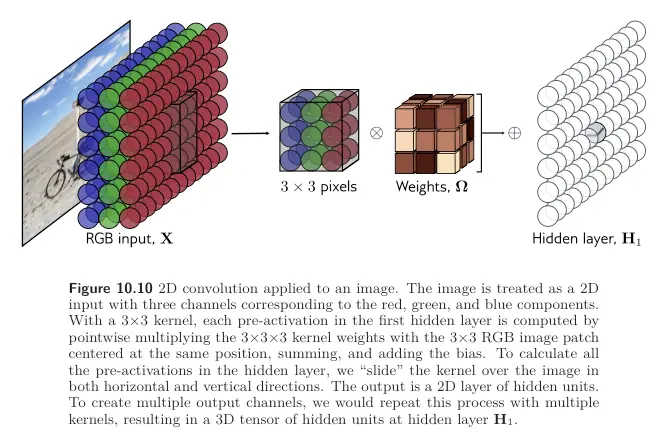

RGB Images

When our input is an RGB image, we treat it as a 2D signal with 3 channels, one per colour. A $K \times K$ kernel would then have $K \times K \times 3$ weights and be applied to all 3 input channels at each position to create a 2D output that is the same size as the input image (assuming zero-padding).

To generate multiple output channels, we repeat this process with different kernel weights and stack the resulting 2D outputs to form a 3D tensor.
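A rough sketch of the multi-channel case in plain Python follows. The function name `conv2d_multi` and the array layouts (`[channels][height][width]` for the input, `[out_channels][in_channels][K][K]` for the weights) are assumptions chosen for illustration, as are zero-padding and an odd kernel size.

```python
def conv2d_multi(x, weights, biases):
    """Multi-channel 2D convolution with zero-padding (odd kernel size assumed).

    x:       [C_in][H][W]
    weights: [C_out][C_in][K][K]
    biases:  [C_out]
    Returns  [C_out][H][W]. (Hypothetical helper for illustration.)
    """
    c_in, height, width = len(x), len(x[0]), len(x[0][0])
    k = len(weights[0][0])
    half = k // 2

    out = []
    for w_o, b_o in zip(weights, biases):  # one set of kernels per output channel
        channel = [[0.0] * width for _ in range(height)]
        for i in range(height):
            for j in range(width):
                total = b_o
                for c in range(c_in):  # sum over every input channel
                    for m in range(k):
                        for n in range(k):
                            r, s = i + m - half, j + n - half
                            if 0 <= r < height and 0 <= s < width:
                                total += w_o[c][m][n] * x[c][r][s]
                channel[i][j] = total
        out.append(channel)  # stack 2D outputs into a 3D result
    return out


# A 2x2 "RGB" image whose channels are constant at 1, 2 and 3.
rgb = [[[v] * 2 for _ in range(2)] for v in (1.0, 2.0, 3.0)]
ones = [[[1.0] * 3 for _ in range(3)] for _ in range(3)]   # [C_in][K][K]
zeros = [[[0.0] * 3 for _ in range(3)] for _ in range(3)]
y = conv2d_multi(rgb, [ones, zeros], [0.0, 0.5])
```

Here `y` has two output channels of size 2×2: the first sums all in-bounds values across the three colour channels, and the second contains only its bias because its weights are zero.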

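- If the kernel is size $K \times K$ and there are $C_i$ input channels, each element of an output channel is a weighted sum of $C_i \times K \times K$ quantities plus one bias.

- Thus, to compute $C_o$ output channels, we need $C_i \times C_o \times K \times K$ weights and $C_o$ biases.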
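The parameter count above is easy to check with a few lines of Python. The helper name `conv_param_count` and the example sizes (a 3×3 kernel, 3 input channels, 16 output channels) are illustrative assumptions.

```python
def conv_param_count(kernel_size, in_channels, out_channels):
    """Return (weights, biases) for a square 2D convolutional layer."""
    # Each output channel has one kernel_size x kernel_size kernel per input channel.
    weights = kernel_size * kernel_size * in_channels * out_channels
    # One bias per output channel.
    biases = out_channels
    return weights, biases


# Example: 3x3 kernel, RGB input (3 channels), 16 output channels.
weights, biases = conv_param_count(3, 3, 16)
print(weights, biases)
```

Note that the count does not depend on the height or width of the image, since the same kernel weights are reused at every position.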

The resulting output dimension is given by: