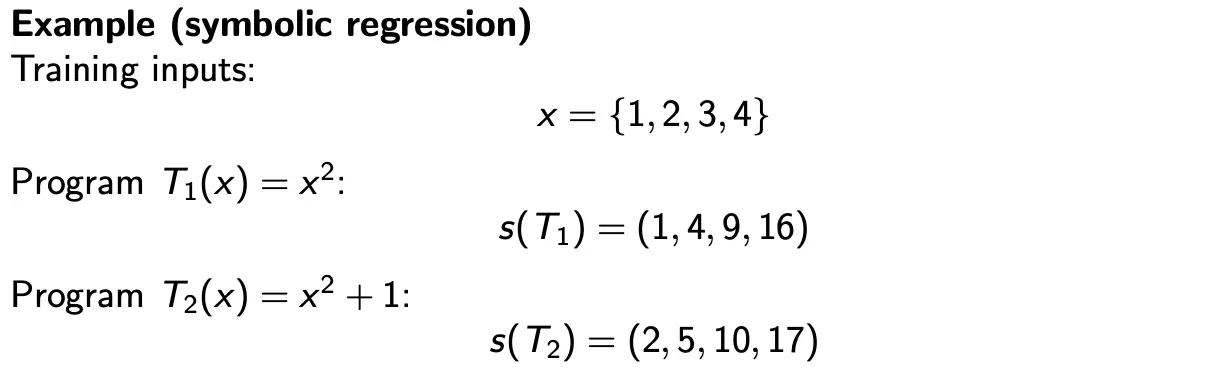

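Traditional GP evolves syntax (program structure). Geometric semantic GP evolves semantics (program behavior). The core idea is to represent each program $P$ by its output vector on a dataset of $n$ training inputs $x_1, \dots, x_n$:

$$
s(P) = \big(P(x_1), P(x_2), \dots, P(x_n)\big) \in \mathbb{R}^n
$$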

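Evolution then operates in a vector space where distances reflect behavioral differences. Operators become geometrically meaningful, and the fitness landscape becomes smoother: if fitness is the distance to the target output vector, the landscape seen by semantic operators is a cone with its single optimum at the target, so it has no other local optima.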

Put simply, instead of thinking about how a program processes its inputs, we judge it only by what it outputs. Each program is then a point in $\mathbb{R}^n$, and the distance between two programs is the distance between their output vectors (for example, the Euclidean distance).
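To make the semantic view concrete, here is a minimal Python sketch. The helper names `semantics` and `semantic_distance`, the four training inputs, and the three toy programs are illustrative assumptions, and Euclidean distance is only one possible choice of metric. Two syntactically different programs that produce the same outputs land on the same point.

```python
import math
from typing import Callable, List, Sequence

# A "program" here is any function from one input to one output (an assumption for brevity).
Program = Callable[[float], float]


def semantics(program: Program, inputs: Sequence[float]) -> List[float]:
    """Return the program's semantic vector: its output on every training input."""
    return [program(x) for x in inputs]


def semantic_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Euclidean distance between two semantic vectors of equal length."""
    return math.sqrt(sum((ai - bi) ** 2 for ai, bi in zip(a, b)))


def p(x: float) -> float:
    return x * x


def q(x: float) -> float:
    # Different syntax from p, but the same outputs on the inputs used below.
    return x * x + x - x


def r(x: float) -> float:
    return 2.0 * x


inputs = [0.0, 1.0, 2.0, 3.0]  # hypothetical training inputs
s_p, s_q, s_r = (semantics(f, inputs) for f in (p, q, r))

print(s_p)                          # [0.0, 1.0, 4.0, 9.0]
print(semantic_distance(s_p, s_q))  # 0.0: p and q are the same point in R^4
print(semantic_distance(s_p, s_r))  # sqrt(10), about 3.162
```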

Suppose two parent programs produce the following outputs on four training points:

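A semantic operator may construct an offspring $O$ whose behavior is some combination of its parents $P_1$ and $P_2$, for instance a convex combination of their output vectors $s(\cdot)$:

$$
s(O) = \alpha\, s(P_1) + (1 - \alpha)\, s(P_2), \qquad \alpha \in [0, 1]
$$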
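A convex combination of this kind can be sketched directly on semantic vectors. The parent outputs, target, and helper names below are hypothetical (they are not the values from the example above), and a single scalar weight `alpha` is assumed, which keeps the child on the line segment between its parents. The loop checks the two properties discussed next: each child output lies between the parent outputs, and the child's Euclidean error never exceeds that of the worse parent.

```python
import math
import random
from typing import List, Sequence


def convex_crossover(s1: Sequence[float], s2: Sequence[float], alpha: float) -> List[float]:
    """Offspring semantics alpha*s1 + (1 - alpha)*s2: a point on the segment between the parents."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(s1, s2)]


def error(s: Sequence[float], target: Sequence[float]) -> float:
    """Euclidean distance from a semantic vector to the target outputs."""
    return math.sqrt(sum((si - ti) ** 2 for si, ti in zip(s, target)))


# Hypothetical values, NOT the outputs from the original example.
parent1 = [1.0, 2.0, 3.0, 4.0]
parent2 = [3.0, 2.0, 1.0, 0.0]
target = [2.0, 2.0, 2.0, 2.0]

child = convex_crossover(parent1, parent2, 0.25)
print(child)  # [2.5, 2.0, 1.5, 1.0]

rng = random.Random(0)
worst_parent = max(error(parent1, target), error(parent2, target))
for _ in range(1000):
    c = convex_crossover(parent1, parent2, rng.random())
    # Every output lies between the parent outputs (small tolerance for rounding)...
    assert all(min(a, b) - 1e-12 <= ci <= max(a, b) + 1e-12
               for a, b, ci in zip(parent1, parent2, c))
    # ...and, since Euclidean distance is convex, the child is never worse than the worse parent.
    assert error(c, target) <= worst_parent + 1e-12
print("all checks passed")
```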

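The child behaves “between” its parents on the training set, which makes the fitness landscape smoother: when error is measured as Euclidean distance to the target outputs, such a child is never worse than the worse of its two parents. Local improvements in semantics also become easier to exploit. The price is that explicit program representations grow very quickly: each offspring contains both parents in full, so unless special simplification mechanisms are used, program size can grow exponentially with the number of generations.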
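The growth problem shows up as soon as offspring trees are built explicitly. This sketch assumes programs are nested tuples `(operator, left, right)` with `"x"` or numeric leaves, and builds children as `r*P1 + (1 - r)*P2` with a random constant `r`; the tuple encoding, the initial parents, and the helper names are all illustrative assumptions, not a reference implementation.

```python
import random
from typing import Tuple, Union

# Expression trees as nested tuples: (operator, left, right); leaves are "x" or numeric constants.
Expr = Union[str, float, Tuple]


def size(expr: Expr) -> int:
    """Count the nodes in an expression tree."""
    if isinstance(expr, tuple):
        return 1 + sum(size(arg) for arg in expr[1:])
    return 1


def geometric_crossover_tree(p1: Expr, p2: Expr, r: float) -> Expr:
    """Build the offspring r*p1 + (1 - r)*p2 explicitly, with no simplification."""
    return ("+", ("*", r, p1), ("*", ("-", 1.0, r), p2))


rng = random.Random(42)
a: Expr = "x"              # hypothetical initial parent: x
b: Expr = ("*", 2.0, "x")  # hypothetical initial parent: 2*x
for generation in range(1, 11):
    # Each generation produces two new offspring from the current pair of parents.
    a, b = (
        geometric_crossover_tree(a, b, rng.random()),
        geometric_crossover_tree(b, a, rng.random()),
    )
    print(generation, size(a))
```

Each child contains both parents plus seven extra nodes, so `size(child) = size(p1) + size(p2) + 7`. With these starting parents the trees have `9 * 2**g - 7` nodes after `g` generations, which is 9209 nodes after ten generations even though each child's behavior is just a weighted average of two outputs per training point.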