AS is a basic form of ant colony optimization.

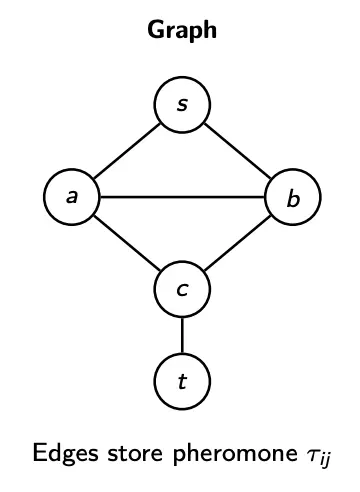

Let us consider a graph search problem. We operate on a graph , where nodes are locations, and edges are possible moves/transitions. A solution is a path or tour, constructed step-by-step. An example problem would be to find the shortest path from to .

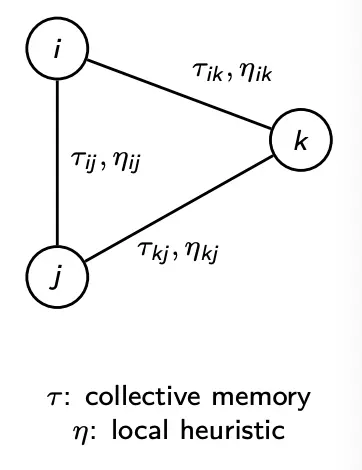

Information Storage

With ACO, we have many agents constructing paths on a graph with shared edge memory. Decisions combine a learned desirability and a local desirability .

- Pheromone memory: Each edge has a pheromone level . High means that this edge often appears in good solutions. Pheromone is shared across the colony (collective memory).

- Heuristic information: Each edge also has a local desirability . For example, for distances.

Heuristic design

Note that the common choice in routing/TSP is . Short edges are locally attractive, and thus if is large, ants will behave more greedily in this local manner.

In general, we just want to be cheap to compute and locally informative.

AS Components

- Ants: Construct solutions by moving on the graph

- Pheromone matrix : Shared memory on edges

- Heuristic : Problem-specific local guidance

- Probabilistic choice rule: Balances exploration vs. exploitation

- Evaporation : Forgetting mechanism

- Reinforcement: Deposit pheromone on good edges

We already saw how and works.

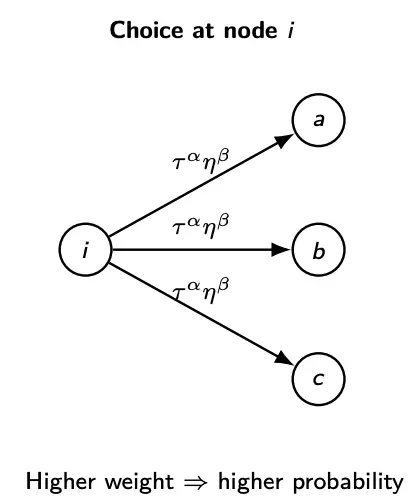

Probabilistic Move Rule

At node , an ant chooses the next node stochastically. It prefers edges with high pheromone , and edges with good heuristic . Because of randomness, we still allow exploration, such that non-best edges can be selected.

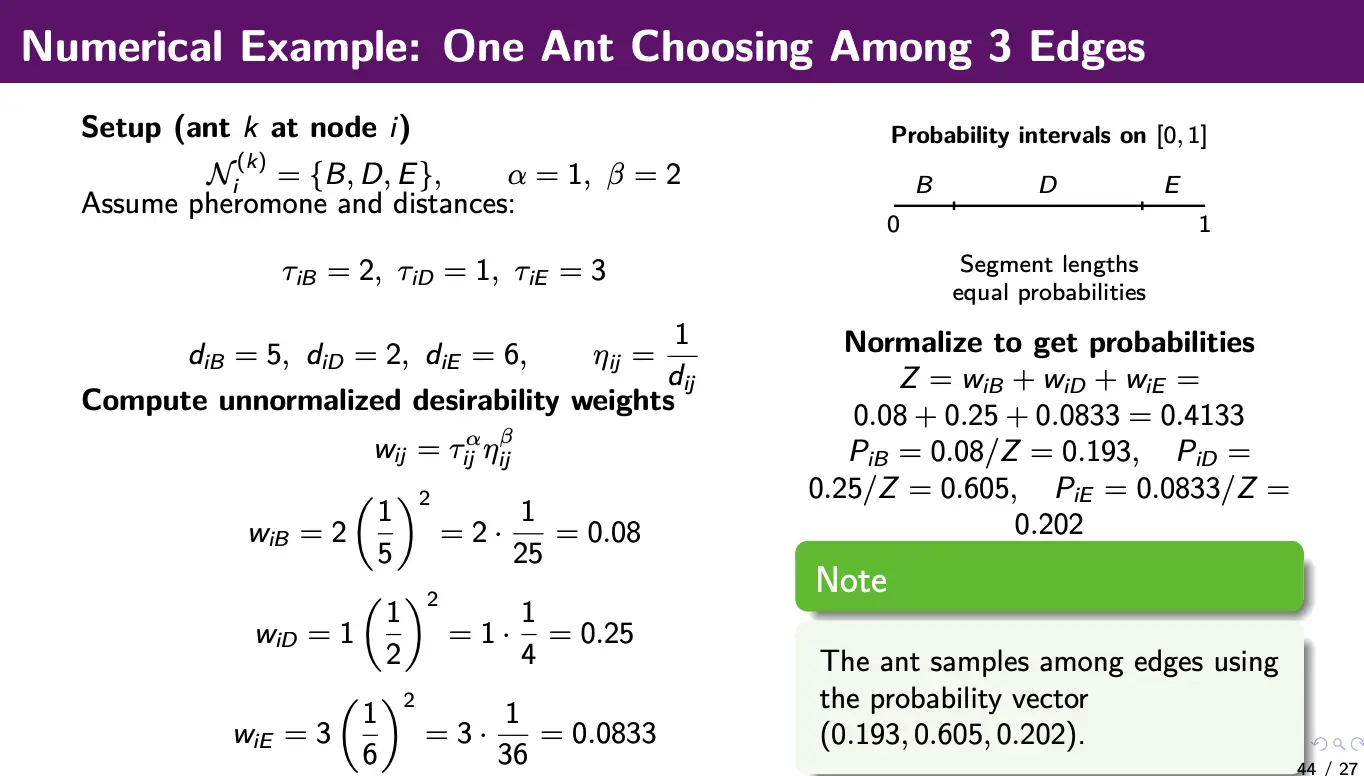

Strength of pheromone influence is controlled with parameter , and strength of heuristic influence is controlled with .

The transition probability is then determined as:

which is essentially just normalized probability for each path:

- : probability ant moves from

- : feasible next nodes (e.g., unvisited cities)

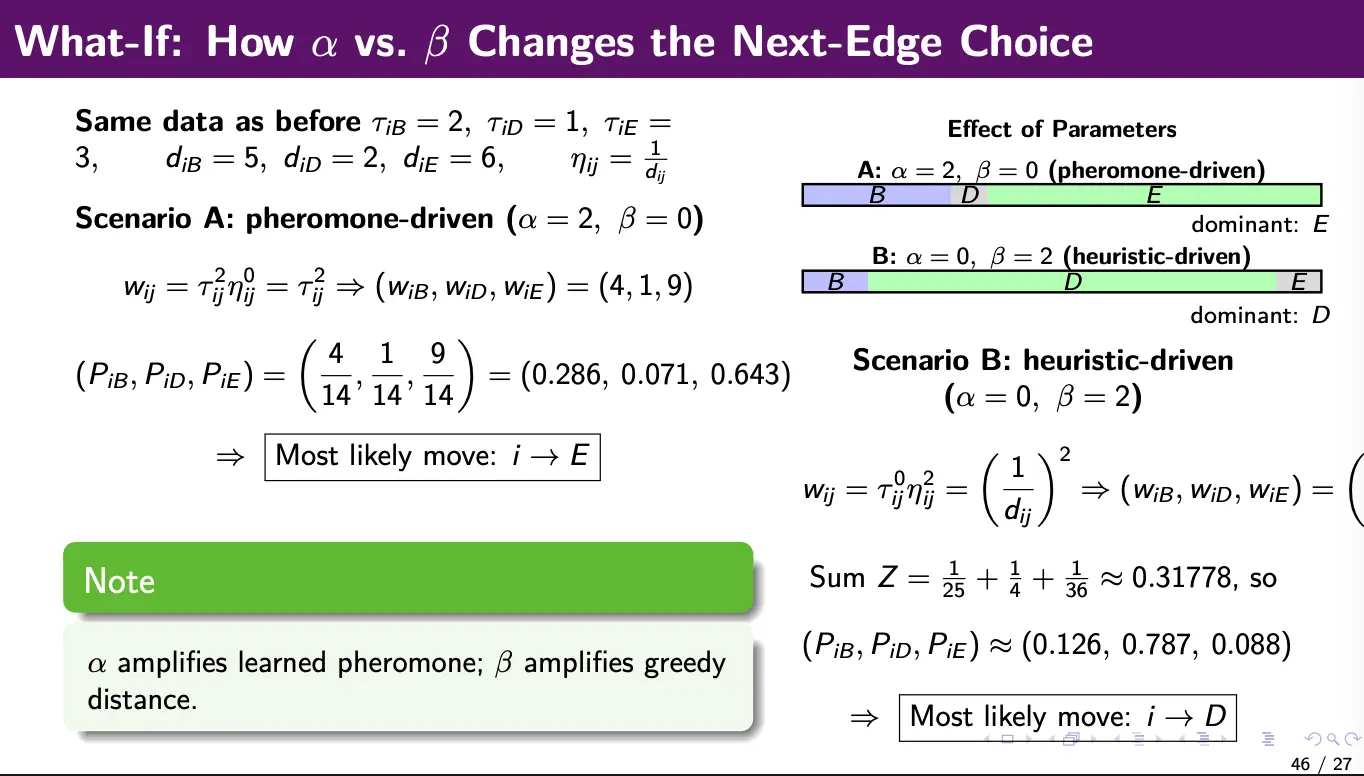

If , we are purely using heuristic (probabilistically greedy). If we are using , we are purely using pheromone (memory only).

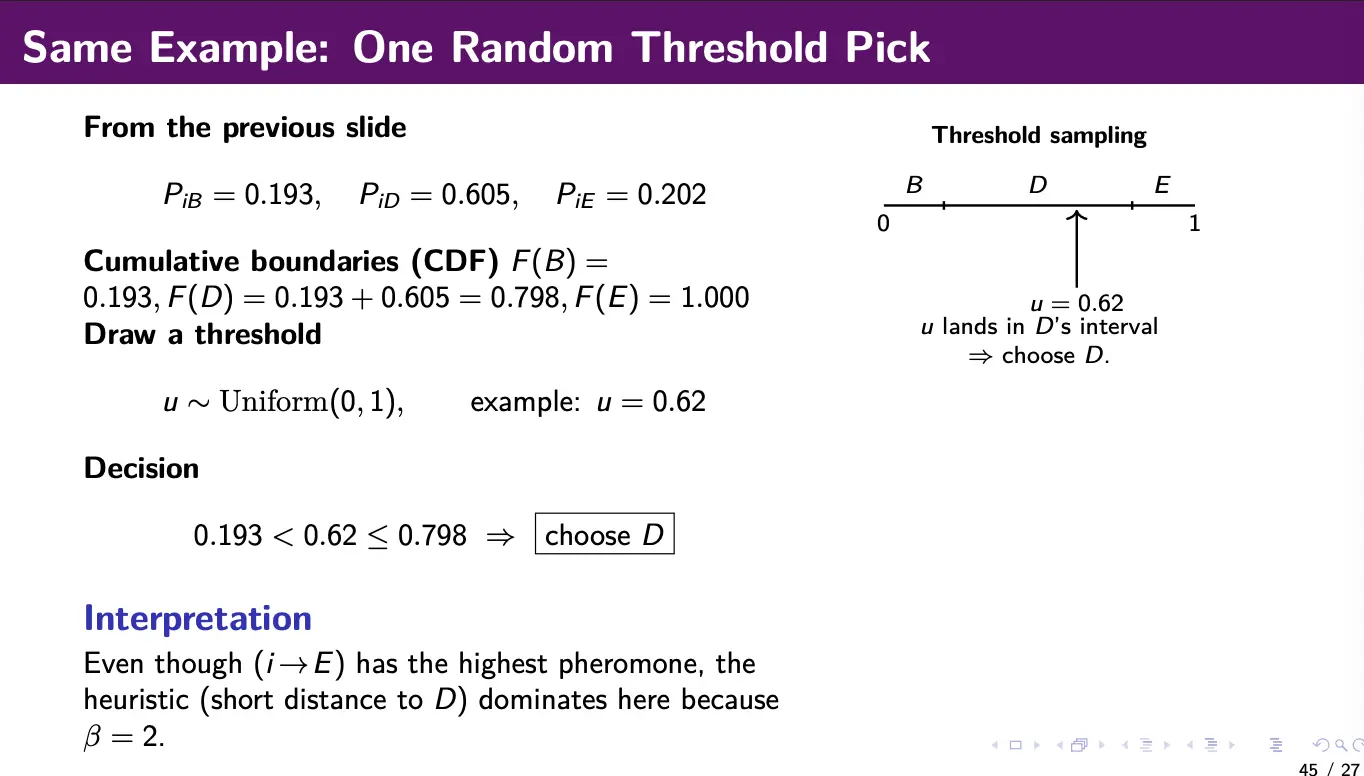

We can pick a next action through roulette-wheel sampling. We compute the transition probabilities using the formula above, draw a random threshold , then select the next city cumulative probability:

Numerical example:

Parameter effects:

- Increased means that ants follow pheromone trails more strongly (exploitation), exploiting experience

- Increased means that ants prefer locally good edges more strongly (heuristic greediness), exploiting problem structure

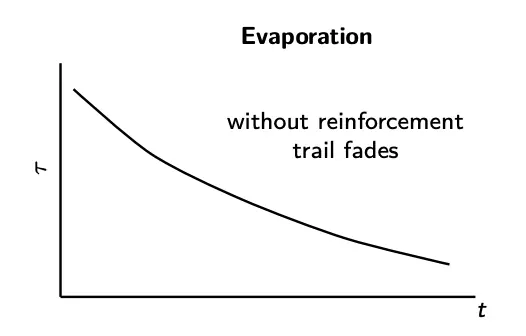

Pheromone Update

Pheromones are updated using some update rule. A generic global update is:

- is the evaporation rate. Higher means faster evaporation (more exploration).

- is the number of ants

- is the deposit (reinforcement) by ant , which is usually based on solution quality.

For example, a typical deposit rule is:

where is the tour/path cost, and is a pheromone scaling constant.

Thus, we can think of this pheromone update rule as combining the evaporation and reinforcement:

Stagnation

Note that a common failure mode is stagnation: if one trail becomes too dominant early, the colony may stop exploring alternatives. Some solutions to this include:

- Increasing evaporation rate

- Limiting pheromone values (Max-Min Ant System)

- Restarting pheromones when diversity collapses

- Injecting random/exploratory ants.

Convergence vs. Stagnation: For convergence, ants increasingly select the same edges, with pheromones concentrating on a few tours. For stagnation, no new tours are explored, with all ants following the same path (stuck in local optima).

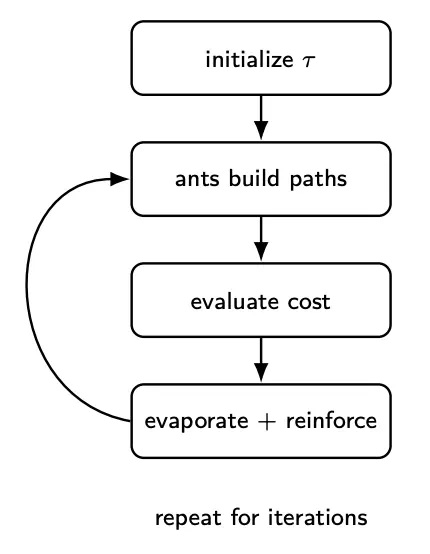

Walkthrough

For one iteration of ACO:

- Each ant constructs a path using

- Evaluate cost for each ant’s solution

- Evaporate pheromones

- Reinforce edges using good solutions

Early on, this will explore many edges (diversity). Later on, strong trails will emerge (exploitation).

This basic version we have introduced here is called the Ant System.