Many hard problems are solved in nature without central control, with complex global behavior arising from simple local rules.

- Stigmergy: indirect coordination where individuals (agents) communicate and cooperate by modifying their shared environment, rather than through direct communication.

An example of this is the ant path-finding problem: ants repeatedly travel between a nest and a food source. The environment may contain multiple possible paths, and the ants have no global knowledge of the terrain. How do they discover the shortest path? While moving, they deposit pheromones. These pheromones persist for some time before evaporating. Other ants sense the pheromones and are biased toward stronger trails.
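The bias toward stronger trails can be sketched as a weighted random choice. This is a minimal illustration, assuming the probability of taking a trail is directly proportional to its pheromone level; the function name `choose_trail` and the example levels are hypothetical, and real ants (and most ACO variants) use more elaborate response functions.

```python
import random

def choose_trail(pheromone, rng=random):
    """Pick a trail index with probability proportional to its pheromone level.

    Assumption: a simple linear (roulette-wheel) response to pheromone.
    """
    total = sum(pheromone)
    if total == 0:
        # No pheromone anywhere yet: explore uniformly at random.
        return rng.randrange(len(pheromone))
    threshold = rng.random() * total
    cumulative = 0.0
    for index, level in enumerate(pheromone):
        cumulative += level
        if threshold < cumulative:
            return index
    return len(pheromone) - 1  # guard against floating-point round-off

# Hypothetical example: trail 0 carries three times the pheromone of trail 1.
rng = random.Random(0)
counts = [0, 0]
for _ in range(10_000):
    counts[choose_trail([3.0, 1.0], rng)] += 1
```

The weaker trail is still chosen some of the time, which is what keeps exploration alive while the stronger trail attracts most of the traffic.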

Ants choosing shorter paths return to the nest faster. A faster return means that more pheromones are deposited per unit time; longer paths accumulate pheromones more slowly. This provides positive feedback: paths that are used more often become increasingly attractive, and shorter paths reinforce themselves faster than longer ones.

On the other hand, remember that pheromones evaporate over time. Thus, poor or accidental paths lose reinforcement. This serves as negative feedback; evaporation prevents early mistakes from dominating forever.
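The interplay of the two feedback loops can be illustrated with a small deterministic model. This is a sketch under stated assumptions, not a model of real ant behavior: ants split across paths in proportion to pheromone, and each ant deposits an amount inversely proportional to path length, standing in for the higher trip rate on shorter paths. The function `update_trails` and its parameters (`n_ants`, `evaporation`, `deposit`) are hypothetical names chosen for illustration.

```python
def update_trails(pheromone, lengths, n_ants=10, evaporation=0.1, deposit=1.0):
    """One expected-value update of the pheromone on a set of alternative paths."""
    total = sum(pheromone)
    updated = []
    for level, length in zip(pheromone, lengths):
        share = level / total  # expected fraction of ants drawn to this path
        # Positive feedback: more ants and shorter trips mean more pheromone.
        reinforcement = n_ants * share * deposit / length
        # Negative feedback: a fixed fraction of the old pheromone evaporates.
        updated.append((1.0 - evaporation) * level + reinforcement)
    return updated

# Hypothetical setup: both paths start equally attractive,
# but the second path is twice as long as the first.
pheromone = [1.0, 1.0]
lengths = [1.0, 2.0]
for _ in range(50):
    pheromone = update_trails(pheromone, lengths)

share_short = pheromone[0] / sum(pheromone)  # fraction of pheromone on the short path
```

Under these assumptions the short path's share of the pheromone grows at every step. Setting `n_ants=0` shows evaporation alone: every trail simply decays, so a path that stops being used gradually loses its attraction.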

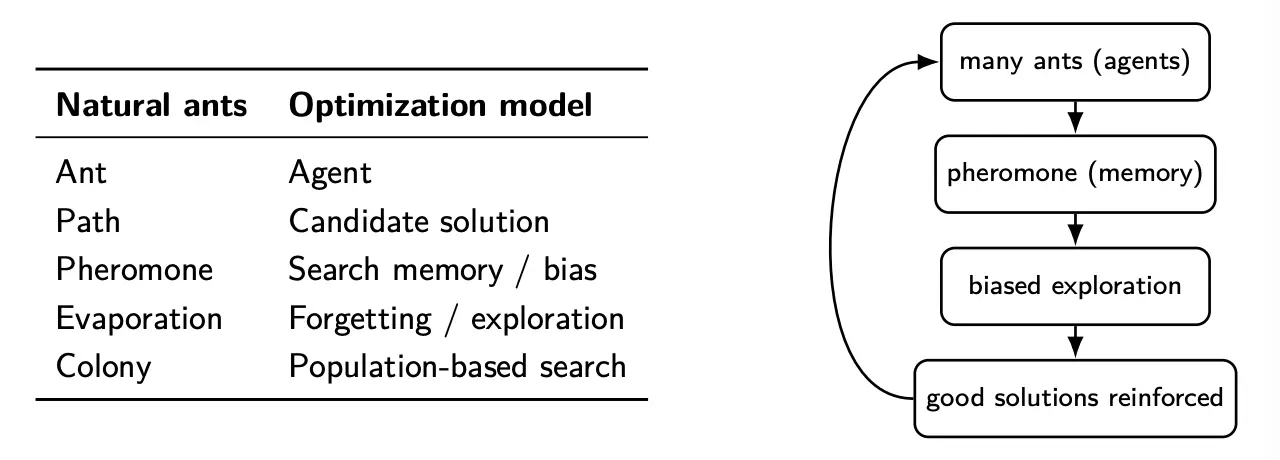

We can map this to optimization concepts. Optimization emerges from biased random exploration guided by collective memory.

We can have many ants that explore the search space in parallel, building solutions incrementally. Good solutions reinforce themselves via pheromones, and bad solutions fade away through evaporation.

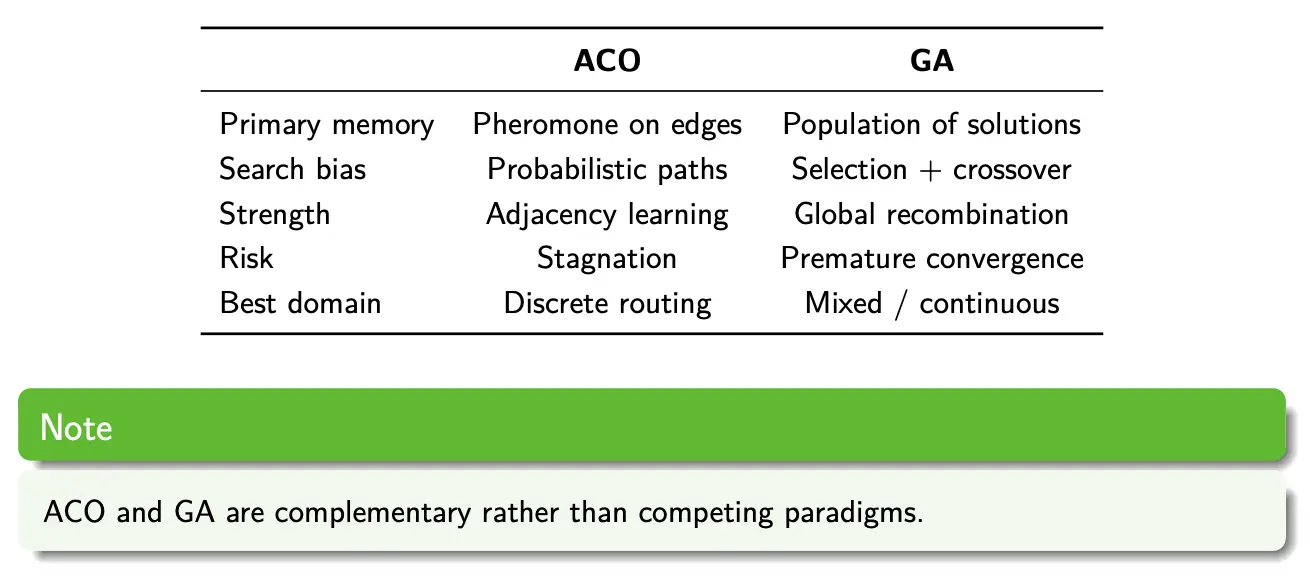
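Putting these pieces together, a basic ACO loop for a small travelling salesman instance might look like the sketch below. It is one simple variant, not a reference implementation: the weighting of pheromone against inverse distance through `alpha` and `beta` follows a convention commonly described for ACO, and all names, default values, and the four-city example are assumptions made for illustration.

```python
import math
import random

def tour_length(tour, dist):
    """Total length of a closed tour that returns to its starting city."""
    return sum(dist[tour[i]][tour[(i + 1) % len(tour)]] for i in range(len(tour)))

def build_tour(dist, pheromone, alpha, beta, rng):
    """One ant builds a tour incrementally, biased by pheromone and closeness."""
    n = len(dist)
    current = rng.randrange(n)
    tour = [current]
    unvisited = set(range(n)) - {current}
    while unvisited:
        candidates = sorted(unvisited)
        weights = [
            (pheromone[current][j] ** alpha) * ((1.0 / dist[current][j]) ** beta)
            for j in candidates
        ]
        current = rng.choices(candidates, weights=weights, k=1)[0]
        tour.append(current)
        unvisited.remove(current)
    return tour

def ant_colony_tsp(dist, n_ants=20, n_iterations=100, alpha=1.0, beta=2.0,
                   evaporation=0.5, deposit=1.0, seed=0):
    rng = random.Random(seed)
    n = len(dist)
    pheromone = [[1.0] * n for _ in range(n)]  # collective memory of the colony
    best_tour, best_length = None, math.inf
    for _ in range(n_iterations):
        # Many ants explore in parallel using the same pheromone matrix.
        tours = [build_tour(dist, pheromone, alpha, beta, rng) for _ in range(n_ants)]
        # Evaporation: every edge loses a fraction of its pheromone.
        for i in range(n):
            for j in range(n):
                pheromone[i][j] *= 1.0 - evaporation
        # Reinforcement: shorter tours deposit more pheromone on their edges.
        for tour in tours:
            length = tour_length(tour, dist)
            if length < best_length:
                best_tour, best_length = tour, length
            for k in range(n):
                a, b = tour[k], tour[(k + 1) % n]
                pheromone[a][b] += deposit / length
                pheromone[b][a] += deposit / length
    return best_tour, best_length

# Hypothetical instance: four cities on the corners of a unit square.
cities = [(0.0, 0.0), (0.0, 1.0), (1.0, 1.0), (1.0, 0.0)]
dist = [[math.hypot(ax - bx, ay - by) for (bx, by) in cities] for (ax, ay) in cities]
best_tour, best_length = ant_colony_tsp(dist)
```

On this toy instance the shortest tour is the perimeter of the square, with length 4. Larger instances usually need tuning of `n_ants`, `evaporation`, `alpha`, and `beta`.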

ACO vs. GA

ACO tends to outperform genetic algorithms when the problem is combinatorial (permutations, graphs) with a large, discrete search space. In these cases, solution quality depends strongly on adjacency structure, so good partial solutions such as edges and subpaths should be reused.

- Examples: TSP, vehicle routing, network routing, scheduling with precedence constraints.

GA tends to outperform ACO when variables are real-valued or mixed-type, especially for problems that benefit from recombining distant features and whose building blocks are not adjacency-based. GA is also preferable when fitness evaluation is expensive and the population size must be small.

- Examples: continuous optimization, hyperparameter tuning, feature selection, neural architecture search.

ACO Characteristics

ACO is most suitable for combinatorial optimization problems that become infeasible to solve with classical optimization methods when the problem size is large. These are mainly discrete optimization problems, although some ACO variants handle continuous optimization problems.

Advantages:

- Stochastic, population-based method (like GA)

- Retains memory of the entire colony instead of just the previous generation (as in GA)

- Less affected by poor initial solutions, because paths are selected randomly and many are combined over time

- Can handle dynamic environments

Disadvantages:

- Mainly empirical, with very limited theoretical analysis; convergence proofs exist only for some methods under certain conditions

- A lot of parameters, often requiring experimentation to select

- May take a long time to converge