Content-Addressable Memory

A content-addressable memory (CAM) is a system that can take part of a pattern and produce the most likely match from memory. For instance, a CAM would be able to interpret these:

intel__gent

nueroscience

War+++loo

pa$sv0rd

1,3, ,7, ,1

A CAM system finds the closest match between an input and a set of known patterns. Instead of looking data up by address, it retrieves data by comparing the input query directly with the stored contents. Hopfield networks mimic the behavior of a CAM in a biologically inspired way using neural networks.
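To make the idea concrete, here is a toy lookup that returns the stored pattern closest to a query. It is a sketch under stated assumptions: patterns are equal-length strings, and "closest" means smallest Hamming distance. The names `hamming`, `recall` and the `memory` list are hypothetical. A Hopfield network reaches the same kind of answer through its dynamics rather than through an explicit search like this one.

```python
def hamming(a: str, b: str) -> int:
    """Number of positions at which two equal-length strings differ."""
    if len(a) != len(b):
        raise ValueError("patterns must have the same length")
    return sum(ca != cb for ca, cb in zip(a, b))


def recall(query: str, memory: list[str]) -> str:
    """Return the stored pattern with the smallest Hamming distance to the query.

    Assumption: only patterns with the same length as the query are candidates.
    """
    candidates = [p for p in memory if len(p) == len(query)]
    return min(candidates, key=lambda p: hamming(query, p))


memory = ["intelligent", "neuroscience", "password", "Waterloo"]
print(recall("intel__gent", memory))   # intelligent
print(recall("pa$sv0rd", memory))      # password
```

A fixed-length distance cannot handle inserted or deleted characters. For example, "War+++loo" is one character longer than "Waterloo", so this sketch would not match it.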

Hopfield Networks

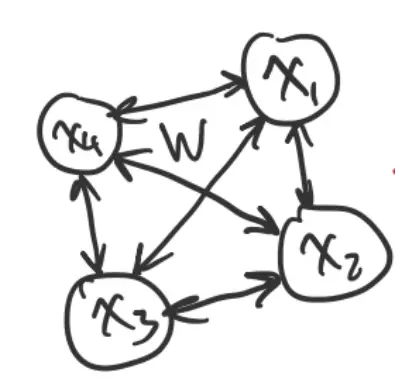

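Suppose we have a network of $N$ neurons, each connected to all the others.

- $W_{ij}$ is the connection strength from node $j$ to node $i$. We assume that $W_{ij} = W_{ji}$ and $W_{ii} = 0$ (no self-connections).

We want this network to converge to the nearest of a set of targets or inputs.

Each node in the network can be in state $-1$ or $1$, such that $x_i \in \{-1, 1\}$.

Suppose each node wants to change its state so that:

$$x_i = \begin{cases} -1 & \text{if } \sum_{j \ne i} W_{ij} x_j + b_i < 0 \\ 1 & \text{if } \sum_{j \ne i} W_{ij} x_j + b_i \ge 0 \end{cases}$$

where $b_i$ is the bias of node $i$.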
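This is a minimal sketch of the threshold rule for a single node. It assumes NumPy and a zero-diagonal weight matrix, so `W[i] @ x` already skips $j = i$. The function name `update_node` and the three-node weights are hypothetical illustrations.

```python
import numpy as np


def update_node(x: np.ndarray, W: np.ndarray, b: np.ndarray, i: int) -> np.ndarray:
    """Return a copy of state x with node i set by the threshold rule.

    Node i becomes +1 if its net input sum_{j != i} W[i, j] x[j] + b[i] is >= 0,
    and -1 otherwise. Assumes W[i, i] == 0, so W[i] @ x already excludes j == i.
    """
    net_input = W[i] @ x + b[i]
    x_new = x.copy()
    x_new[i] = 1 if net_input >= 0 else -1
    return x_new


# Hypothetical 3-node network with zero bias.
W = np.array([[0.0, 1.0, -1.0],
              [1.0, 0.0, -1.0],
              [-1.0, -1.0, 0.0]])
b = np.zeros(3)
x = np.array([-1, 1, -1])
print(update_node(x, W, b, 0))   # [ 1  1 -1]
```

Node 0 receives net input $1 \cdot 1 + (-1)(-1) = 2 \ge 0$, so it switches from $-1$ to $1$.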

If we have a single pattern $x$ that we would like the network to recall, we could set the weights such that:

- $W_{ij} > 0$ between any two nodes in the same state ($x_i = x_j$)

- $W_{ij} < 0$ between any two nodes that are different ($x_i \ne x_j$)
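One concrete choice that satisfies both conditions is $W_{ij} = x_i x_j$ for $i \ne j$, with $W_{ii} = 0$. Under that choice, node $i$ receives net input $\sum_{j \ne i} x_i x_j x_j = (N-1)x_i$, which has the same sign as $x_i$, so the pattern is stable. The sketch below assumes NumPy and a hypothetical four-node pattern. `one_pattern_weights` is an illustrative name.

```python
import numpy as np


def one_pattern_weights(x):
    """W[i, j] = x[i] * x[j] for i != j and W[i, i] = 0, for a single +/-1 pattern x.

    The weight is +1 when nodes i and j agree and -1 when they differ.
    """
    W = np.outer(x, x).astype(float)
    np.fill_diagonal(W, 0.0)   # no self-connections
    return W


x = np.array([1, -1, -1, 1])   # hypothetical pattern to store
W = one_pattern_weights(x)

net_input = W @ x              # equals (N - 1) * x for this choice of W
stable = np.array_equal(np.where(net_input >= 0, 1, -1), x)
print(stable)                  # True
```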

So we’ve seen that setting the weights is easy if we have one target. But what if we have a bunch of different targets that we want to encode?

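Given $M$ target network states, $x^{(1)}, x^{(2)}, \ldots, x^{(M)}$, each of length $N$, we want every target to be a stable state of the network:

$$x^{(s)} = \operatorname{sign}\left(W x^{(s)} + b\right), \quad s = 1, \ldots, M$$

where the $i$th component is calculated using the weights and biases as:

$$x_i^{(s)} = \operatorname{sign}\left(\sum_{j \ne i} W_{ij} x_j^{(s)} + b_i\right)$$

with the convention $\operatorname{sign}(0) = 1$.

We then find the weights as:

$$W_{ij} = \frac{1}{M}\sum_{s=1}^{M} x_i^{(s)} x_j^{(s)} \quad (i \ne j), \qquad W_{ii} = 0$$

- We call $W_{ij}$ the average co-activation between nodes $i$ and $j$. It is found by running through all the stored patterns and looking at the states of the two nodes that the weight connects. We count how many times they are in the same versus opposite states and then average.

Writing this in matrix form:

$$W = \frac{1}{M}\sum_{s=1}^{M} x^{(s)} x^{(s)\top} - I$$

- $x^{(s)}$ is a column vector and $x^{(s)\top}$ is a row vector, so each product $x^{(s)} x^{(s)\top}$ is an $N \times N$ matrix. Subtracting $I$ sets the diagonal to zero, because every diagonal entry of the average is $\frac{1}{M}\sum_s (x_i^{(s)})^2 = 1$.

This method works best if the target states $x^{(1)}, \ldots, x^{(M)}$ are all mutually orthogonal.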
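Orthogonality helps because, with $b = 0$ and $x^{(t)\top} x^{(s)} = 0$ for $t \ne s$, we get $W x^{(s)} = \frac{1}{M}\sum_t x^{(t)} \left(x^{(t)\top} x^{(s)}\right) - x^{(s)} = \left(\frac{N}{M} - 1\right) x^{(s)}$. Every target is therefore a fixed point as long as $N > M$. The sketch below checks this with NumPy on two hypothetical orthogonal patterns. The helper names `hebbian_weights` and `is_stable` are illustrative.

```python
import numpy as np


def hebbian_weights(X):
    """W = (1/M) sum_s x^(s) x^(s)^T - I, with the M targets stored as the rows of X (M x N)."""
    M, N = X.shape
    return X.T @ X / M - np.eye(N)


def is_stable(x, W, b):
    """True if the threshold rule leaves every node of x unchanged."""
    return np.array_equal(np.where(W @ x + b >= 0, 1, -1), x)


# Two mutually orthogonal +/-1 patterns of length N = 4 (hypothetical data).
X = np.array([[1,  1, -1, -1],
              [1, -1,  1, -1]])
W = hebbian_weights(X)
b = np.zeros(X.shape[1])

print(X[0] @ X[1])                       # 0, so the patterns are orthogonal
print([is_stable(x, W, b) for x in X])   # [True, True]
```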

Hopfield Energy

Hopfield recognized a link between these network states and the Ising model in physics.

- Ising model: Lattice of interacting magnetic dipoles, each of which can be “up” or “down”. The state of each dipole depends on its neighbors.

By analogy, Hopfield energy is a scalar $E$ that we compute for any network state $x$. The dynamics are defined such that updating a neuron never increases $E$. The network therefore falls into low-energy states, which correspond to the stored memories/patterns.

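Hopfield energy is defined as:

$$E = -\frac{1}{2}\sum_{i=1}^{N}\sum_{j \ne i} x_i W_{ij} x_j - \sum_{i=1}^{N} b_i x_i$$

where $x_i \in \{-1, 1\}$ and $W_{ij} = W_{ji}$.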

Intuition:

- If $x_i$ and $x_j$ have the same sign, their product is positive. Then, we want $W_{ij}$ to be positive. Since we have $-\frac{1}{2}$ at the front, the whole term will be negative, so a good state corresponds to low energy.

- The term $b_i x_i$ reflects that there is a cost to each node being on or off. If the node is on ($x_i = 1$), it reduces the energy by $b_i$.
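The energy can be evaluated directly. This sketch assumes NumPy, $\pm 1$ states and a symmetric, zero-diagonal $W$, in which case $x^\top W x$ contains no $i = j$ terms. `hopfield_energy` is a hypothetical helper name. With a single hypothetical stored pattern, the stored state has lower energy than a copy with one bit flipped.

```python
import numpy as np


def hopfield_energy(x, W, b):
    """E = -1/2 sum_i sum_{j != i} x_i W_ij x_j - sum_i b_i x_i.

    Assumes W is symmetric with a zero diagonal, so x @ W @ x has no i == j terms.
    """
    return -0.5 * (x @ W @ x) - b @ x


x = np.array([1, -1, -1, 1])          # stored pattern (hypothetical)
W = np.outer(x, x) - np.eye(len(x))   # single-pattern weights with zero diagonal
b = np.zeros(len(x))

x_noisy = x.copy()
x_noisy[0] = -x_noisy[0]              # flip the first bit

print(hopfield_energy(x, W, b))                                   # -6.0
print(hopfield_energy(x, W, b) < hopfield_energy(x_noisy, W, b))  # True
```

For the stored state, every pair term is $-\frac{1}{2} x_i x_i x_j x_j = -\frac{1}{2}$. There are $N(N-1) = 12$ ordered pairs, giving $E = -6$.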

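To minimize energy with respect to the node states, we use gradient descent:

$$\tau \frac{dx_i}{dt} = -\frac{\partial E}{\partial x_i}$$

or

$$\tau \frac{dx_i}{dt} = \sum_{j \ne i} W_{ij} x_j + b_i$$

which is similar to the update rule we saw earlier: each node moves toward the sign of its net input.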
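The claim that updates never increase $E$ can be checked numerically. With symmetric weights, changing node $i$ alone changes the energy by $\Delta E = -(x_i^{\text{new}} - x_i^{\text{old}})\, h_i$, where $h_i = \sum_{j \ne i} W_{ij} x_j + b_i$. This is never positive when $x_i^{\text{new}}$ takes the sign of $h_i$. The sketch below assumes NumPy, a random symmetric zero-diagonal network (hypothetical data) and a random update order within each sweep. The helper names are illustrative.

```python
import numpy as np


def energy(x, W, b):
    """Hopfield energy for a symmetric, zero-diagonal W."""
    return -0.5 * (x @ W @ x) - b @ x


def async_sweep(x, W, b, rng):
    """Update every node once, one at a time, in a random order.

    Returns the new state and the energy recorded after each single-node update.
    """
    x = x.copy()
    energies = []
    for i in rng.permutation(len(x)):
        h = W[i] @ x + b[i]          # net input; W[i, i] == 0 excludes j == i
        x[i] = 1 if h >= 0 else -1   # threshold rule
        energies.append(energy(x, W, b))
    return x, energies


rng = np.random.default_rng(0)
N = 8
A = rng.normal(size=(N, N))
W = (A + A.T) / 2                    # random symmetric weights (hypothetical network)
np.fill_diagonal(W, 0.0)             # no self-connections
b = rng.normal(size=N)
x = rng.choice([-1, 1], size=N)

E_trace = [energy(x, W, b)]
for _ in range(5):
    x, Es = async_sweep(x, W, b, rng)
    E_trace.extend(Es)

# The energy sequence should never go up (small tolerance for rounding).
print(all(e2 <= e1 + 1e-9 for e1, e2 in zip(E_trace, E_trace[1:])))   # True
```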

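We can also use the energy to train the weights, by gradient descent on $E$ with respect to $W_{ij}$ for a single target $x$. Treating $W_{ij}$ and $W_{ji}$ as one shared (symmetric) weight:

If $i \ne j$:

$$\frac{\partial E}{\partial W_{ij}} = -x_i x_j$$

If $i = j$:

$$\frac{\partial E}{\partial W_{ii}} = 0$$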

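As a result, the gradient with respect to the whole weight matrix is:

$$\nabla_W E = -x x^\top + I$$

where $x x^\top$ is a rank-1 matrix. Because $x_i^2 = 1$, every diagonal entry of $x x^\top$ is $1$. We add the identity matrix to the right-hand side so that the gradient of the diagonal weights is zero, which keeps $W_{ii} = 0$ during gradient descent.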

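Over all $M$ targets, we have:

$$\nabla_W E = -\frac{1}{M}\sum_{s=1}^{M} x^{(s)} x^{(s)\top} + I = -\frac{1}{M} X^\top X + I$$

where $X$ is the $M \times N$ matrix whose rows are the targets $x^{(s)\top}$. Thus:

$$\tau \frac{dW}{dt} = \frac{1}{M} X^\top X - I$$

where $X^\top X$ computes co-activation states between all pairs of neurons.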

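Because the input patterns are fixed, the co-activation matrix $X^\top X$ remains constant across iterations. The gradient therefore does not depend on $W$ and never changes direction. Starting from $W = 0$, repeated updates move $W$ linearly along that fixed direction. After any number of steps, $W$ is proportional to $\frac{1}{M} X^\top X - I$, the same matrix given by the co-activation rule derived earlier.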
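Because the gradient is constant, the weight updates are easy to simulate. This minimal sketch assumes NumPy, three hypothetical targets and an arbitrary step size `eta`. Starting from $W = 0$, every step adds the same matrix. After $k$ steps, $W = k\,\eta\left(\frac{1}{M}X^\top X - I\right)$, and the diagonal stays at zero.

```python
import numpy as np


def weight_gradient(X):
    """grad_W E = -(1/M) X^T X + I for +/-1 targets stored as the rows of X (M x N)."""
    M, N = X.shape
    return -(X.T @ X) / M + np.eye(N)


X = np.array([[1,  1, -1, -1],
              [1, -1,  1, -1],
              [1,  1,  1, -1]])   # hypothetical targets: M = 3, N = 4
W = np.zeros((4, 4))
eta = 0.1                         # step size (assumed value)
steps = 50
for _ in range(steps):
    W -= eta * weight_gradient(X)   # same gradient every step: it does not depend on W

direction = X.T @ X / X.shape[0] - np.eye(X.shape[1])
print(np.allclose(W, steps * eta * direction))   # True
print(np.allclose(np.diag(W), 0))                # True
```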

Example

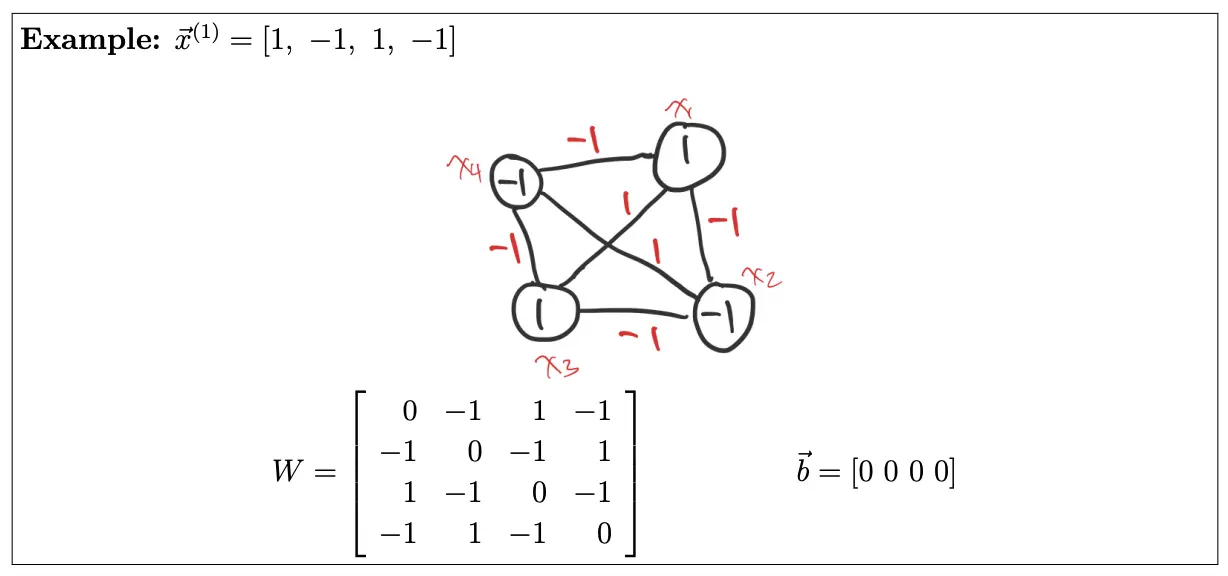

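Let’s say we have $N = 4$ neurons and $M$ target patterns.

Stacking them as rows into the $M \times N$ data matrix $X$:

$$X = \begin{bmatrix} x^{(1)\top} \\ \vdots \\ x^{(M)\top} \end{bmatrix}$$

Now, we compute the co-activation states between all pairs of neurons:

$$(X^\top X)_{ij} = \sum_{s=1}^{M} x_i^{(s)} x_j^{(s)}$$

Then:

$$\nabla_W E = -\frac{1}{M} X^\top X + I$$

Using this to do the weight update:

$$\tau \frac{dW}{dt} = \frac{1}{M} X^\top X - I$$

Let’s start with $W = 0$ and take a single Euler step with $\Delta t = \tau$. Then:

$$W = \frac{1}{M} X^\top X - I$$

and we take biases $b = 0$.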

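Now, suppose we have a noisy cue:

- $\tilde{x}$, which agrees with one of the stored targets, $x^{(k)}$, everywhere except the first bit (the first bit should be $x_1^{(k)}$ for this to match $x^{(k)}$)

Using the update rule:

$$x_i \leftarrow \operatorname{sign}\left(\sum_{j \ne i} W_{ij} x_j + b_i\right)$$

Note that Hopfield networks are usually asynchronous, so only one unit is updated at a time. Here we update the units in order, starting from $x = \tilde{x}$.

We have:

$$h_1 = \sum_{j \ne 1} W_{1j} \tilde{x}_j + b_1$$

Then:

$$x_1 \leftarrow \operatorname{sign}(h_1) = x_1^{(k)}$$

So the state is now $x^{(k)}$ (the first bit flipped).

For the second one:

$$x_2 \leftarrow \operatorname{sign}\left(\sum_{j \ne 2} W_{2j} x_j + b_2\right) = x_2^{(k)}$$

The state stays as $x^{(k)}$.

For the third one:

$$x_3 \leftarrow \operatorname{sign}\left(\sum_{j \ne 3} W_{3j} x_j + b_3\right) = x_3^{(k)}$$

The state stays as $x^{(k)}$.

Finally:

$$x_4 \leftarrow \operatorname{sign}\left(\sum_{j \ne 4} W_{4j} x_j + b_4\right) = x_4^{(k)}$$

Thus, after all the updates we have $x = x^{(k)}$, matching the stored target $x^{(k)}$.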
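The whole procedure can be sketched end to end: store targets with the co-activation rule, then recall from a noisy cue using asynchronous updates. The two four-neuron targets below are hypothetical, and `train` and `recall` are illustrative names. The sketch assumes NumPy, $b = 0$, and in-order updates that repeat until a full sweep changes nothing.

```python
import numpy as np


def train(X):
    """Co-activation weights W = (1/M) X^T X - I for +/-1 targets in the rows of X."""
    M, N = X.shape
    return X.T @ X / M - np.eye(N)


def recall(cue, W, b, max_sweeps=10):
    """Asynchronous recall: update nodes one at a time, in order, until a sweep changes nothing."""
    x = cue.copy()
    for _ in range(max_sweeps):
        changed = False
        for i in range(len(x)):
            new_xi = 1 if W[i] @ x + b[i] >= 0 else -1   # threshold rule
            if new_xi != x[i]:
                x[i] = new_xi
                changed = True
        if not changed:
            break
    return x


X = np.array([[1,  1, -1, -1],
              [1, -1,  1, -1]])   # hypothetical targets, N = 4
W = train(X)
b = np.zeros(X.shape[1])
cue = np.array([-1, 1, -1, -1])   # X[0] with its first bit flipped
print(recall(cue, W, b))          # [ 1  1 -1 -1]
```

With these targets, only the first unit changes. Its net input is $-x_4 = -(-1) = 1$, so it flips to $1$ and the state equals the first target. The remaining updates then leave that state unchanged.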