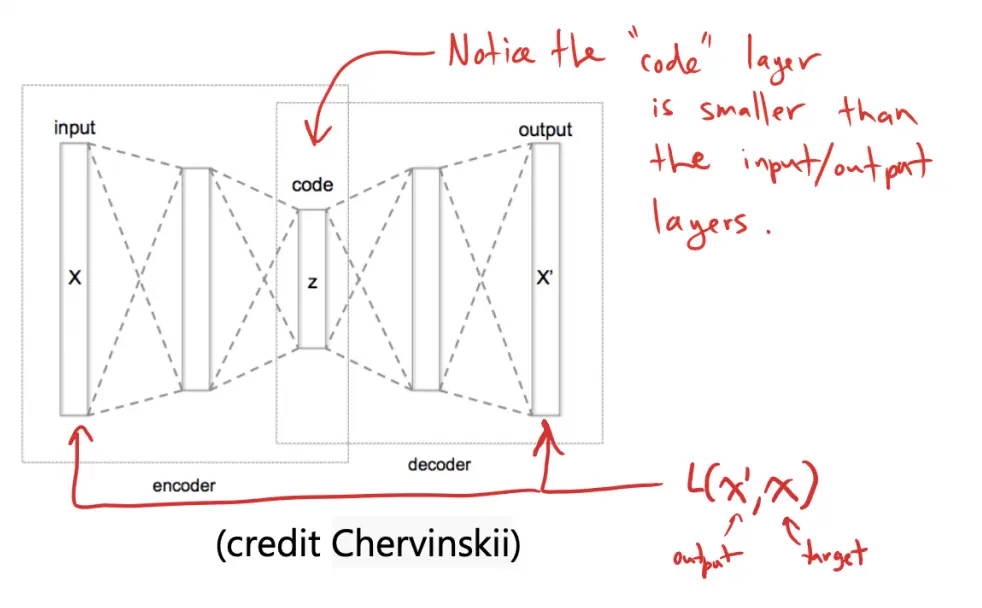

An autoencoder is a neural network that learns to encode (and decode) a set of inputs.

- The code (hidden) layer is smaller than the input and output layers, so the network must learn a compressed representation of its inputs

The autoencoder consists of:

- An encoder that compresses the input into a lower-dimensional representation

- A decoder that reconstructs the original input from this lower-dimensional representation (the code)
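The encoder/decoder split can be illustrated with a minimal NumPy sketch. The sizes (8 inputs, a 3-unit code), the sigmoid encoder, the linear decoder, and the names `encode`, `decode`, `W_enc` and `W_dec` are all illustrative assumptions, not part of the original notes.

```python
import numpy as np

rng = np.random.default_rng(0)

input_dim, code_dim = 8, 3  # assumed sizes: the code is smaller than the input

# Hypothetical parameters: the encoder maps R^8 -> R^3, the decoder maps R^3 -> R^8
W_enc = rng.normal(scale=0.1, size=(code_dim, input_dim))
b_enc = np.zeros(code_dim)
W_dec = rng.normal(scale=0.1, size=(input_dim, code_dim))
b_dec = np.zeros(input_dim)

def encode(x):
    """Compress x into a lower-dimensional code (sigmoid nonlinearity assumed)."""
    return 1.0 / (1.0 + np.exp(-(W_enc @ x + b_enc)))

def decode(h):
    """Reconstruct an input-sized vector from the code (linear output assumed)."""
    return W_dec @ h + b_dec

x = rng.normal(size=input_dim)
h = encode(x)        # lower-dimensional representation
x_tilde = decode(h)  # reconstruction, same size as x
print(h.shape, x_tilde.shape)
```

The weights here are untrained, so `x_tilde` is not yet a good reconstruction; training adjusts them to reduce the reconstruction error.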

The model is trained by minimizing a loss that measures the reconstruction error, commonly the squared error:

$$\mathcal{L}(x, \tilde{x}) = \|\tilde{x} - x\|^2$$

where $\tilde{x}$ is the output (reconstructed input), and $x$ is the original input.
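To make the training objective concrete, here is a minimal sketch that trains a purely linear autoencoder by plain gradient descent, assuming the reconstruction error is the squared error averaged over a batch. The synthetic data, the 8-to-3 sizes, the learning rate, and the names `reconstruction_loss`, `W_enc` and `W_dec` are assumptions chosen for illustration; real autoencoders usually use nonlinearities and an optimizer from a deep-learning library.

```python
import numpy as np

def reconstruction_loss(X_tilde, X):
    """Squared error ||x_tilde - x||^2 per example, averaged over the batch (rows)."""
    return np.mean(np.sum((X_tilde - X) ** 2, axis=1))

rng = np.random.default_rng(0)
X = rng.normal(size=(100, 8))               # 100 synthetic inputs, one per row
W_enc = rng.normal(scale=0.1, size=(3, 8))  # encoder: 8 -> 3
W_dec = rng.normal(scale=0.1, size=(8, 3))  # decoder: 3 -> 8

lr = 0.05
losses = []
for step in range(500):
    H = X @ W_enc.T        # codes, shape (100, 3)
    X_tilde = H @ W_dec.T  # reconstructions, shape (100, 8)
    losses.append(reconstruction_loss(X_tilde, X))

    # Backpropagate the batch-mean squared error
    G = 2.0 * (X_tilde - X) / X.shape[0]  # dL/dX_tilde, shape (100, 8)
    grad_W_dec = G.T @ H                  # shape (8, 3)
    grad_W_enc = (G @ W_dec).T @ X        # shape (3, 8)

    W_enc -= lr * grad_W_enc
    W_dec -= lr * grad_W_dec

print(f"initial loss {losses[0]:.3f}, final loss {losses[-1]:.3f}")
```

Because the code has only 3 units, the loss cannot reach zero on 8-dimensional random data; the network can only keep the 3 directions that explain the most variance.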

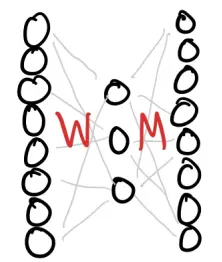

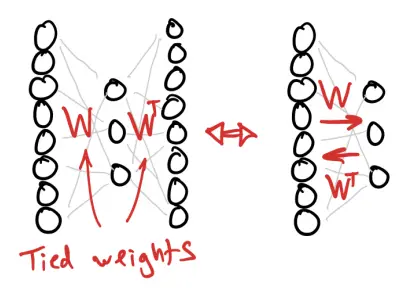

We can think of an autoencoder as just two layers: an encoder and a decoder. We can also "unroll" it into three layers, where the input and output layers have the same size and represent the same state, so that the layers are: input, hidden code, and reconstructed output.
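The unrolled view can be expressed as an ordinary feedforward network whose first and last layers have the same size. This is a minimal NumPy sketch; the layer sizes `[8, 3, 8]`, the tanh on the code layer, the linear output, and the `forward` helper are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
layer_sizes = [8, 3, 8]  # input, hidden code, reconstructed output (assumed sizes)

# One weight matrix between each pair of consecutive layers
weights = [rng.normal(scale=0.1, size=(n_out, n_in))
           for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])]

def forward(x, weights):
    """Run x through the unrolled network and return the state of every layer."""
    states = [x]
    for i, W in enumerate(weights):
        z = W @ states[-1]
        # tanh on the hidden code, linear on the output layer (an assumption)
        states.append(np.tanh(z) if i < len(weights) - 1 else z)
    return states

x = rng.normal(size=layer_sizes[0])
x_in, h, x_tilde = forward(x, weights)
assert x_tilde.shape == x_in.shape  # the output layer lives in the same space as the input
```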

Instead of:

we use

If we allow the encoder weight matrix $W_1$ and the decoder weight matrix $W_2$ to be different, then it is just a 3-layer network. If we enforce that $W_2 = W_1^\top$, then we say that the weights are tied.
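The difference between untied and tied weights can be shown in a few lines of NumPy. In this sketch, `W1` is a hypothetical encoder matrix and the decoder is either an independent matrix or `W1.T`; the sizes and the tanh code layer are assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
input_dim, code_dim = 8, 3

W1 = rng.normal(scale=0.1, size=(code_dim, input_dim))         # encoder weights
W2_untied = rng.normal(scale=0.1, size=(input_dim, code_dim))  # independent decoder weights

def autoencode(x, W_encoder, W_decoder):
    h = np.tanh(W_encoder @ x)  # hidden code
    return W_decoder @ h        # reconstruction

x = rng.normal(size=input_dim)
x_untied = autoencode(x, W1, W2_untied)  # ordinary 3-layer network
x_tied = autoencode(x, W1, W1.T)         # tied weights: decoder is the transpose of the encoder

n_untied = W1.size + W2_untied.size  # encoder and decoder each have their own parameters
n_tied = W1.size                     # the decoder reuses W1, so only one matrix is learned
print(n_untied, n_tied)
```

Tying halves the number of weight parameters. During training, the gradient for a tied `W1` is the sum of the gradient from its use in the encoder and the transpose of the gradient from its use in the decoder.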