How can we train our models so that they are harder to break with adversarial attacks?

Adversarial Training

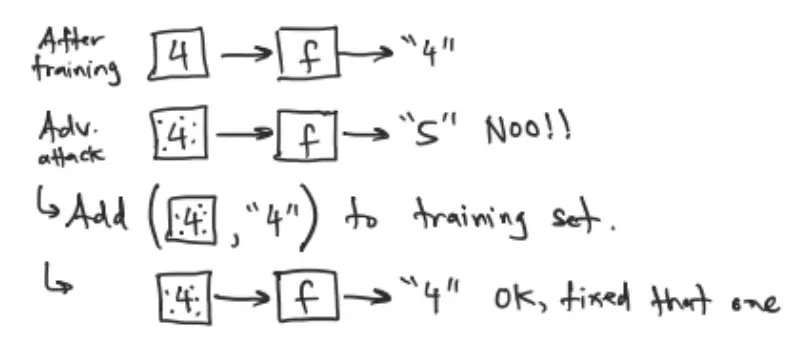

A basic idea is to add adversarial samples to our training set and re-train. That helps somewhat, especially against the specific samples that we added.

What if we built this process into our training? We can incorporate a mini adversarial attack into every gradient step while training.

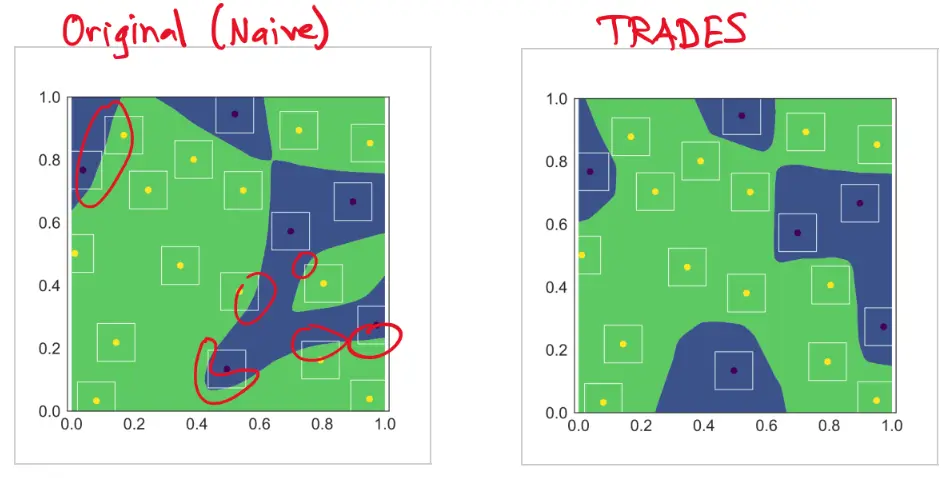
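As a rough illustration of attacking inside every gradient step, here is a minimal sketch. Everything in it is an assumption made for the example: a linear model `f(x) = w·x + b`, labels in {-1, +1}, a logistic loss, a one-step sign-gradient attack with budget `eps`, and a hypothetical toy dataset. It is not a prescribed implementation.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def f(w, b, x):
    # Assumed linear model: f(x) = w . x + b
    return sum(wj * xj for wj, xj in zip(w, x)) + b

def dloss(z):
    # Derivative of the logistic loss log(1 + exp(-z)) w.r.t. the margin z
    if z >= 0:
        e = math.exp(-z)
        return -e / (1.0 + e)
    return -1.0 / (1.0 + math.exp(z))

def attack(w, b, x, t, eps):
    # One step of gradient ascent on the loss w.r.t. the input:
    # d loss / d x_j = dloss(t * f(x)) * t * w_j
    g = dloss(t * f(w, b, x))
    return [xj + eps * sign(g * t * wj) for xj, wj in zip(x, w)]

def adversarial_train(X, T, eps=0.5, lr=0.1, epochs=100):
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for x, t in zip(X, T):
            x_adv = attack(w, b, x, t, eps)   # mini attack on the current model
            g = dloss(t * f(w, b, x_adv))     # gradient step uses the attacked input
            w = [wj - lr * g * t * xj for wj, xj in zip(w, x_adv)]
            b -= lr * g * t
    return w, b

# Hypothetical toy data: two well-separated classes in 2D
X = [(2.0, 2.0), (3.0, 1.0), (1.0, 3.0), (2.5, 2.5),
     (-2.0, -2.0), (-3.0, -1.0), (-1.0, -3.0), (-2.5, -2.5)]
T = [1, 1, 1, 1, -1, -1, -1, -1]
w, b = adversarial_train(X, T)
```

The only change from ordinary training is that the weight update is computed at `x_adv` instead of `x`.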

TRADES

“TRadeoff-inspired Adversarial DEfense via Surrogate-loss minimization” (TRADES) uses an idea like this.

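Consider:

- Model $f : \mathcal{X} \to \mathbb{R}$

- Dataset $(X, T)$ with inputs $X$ and targets $T \in \{-1, +1\}$.

Then, $\mathrm{sign}(f(X))$ is the predicted class of $X$. The classification is correct if $f(X)\,T > 0$.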

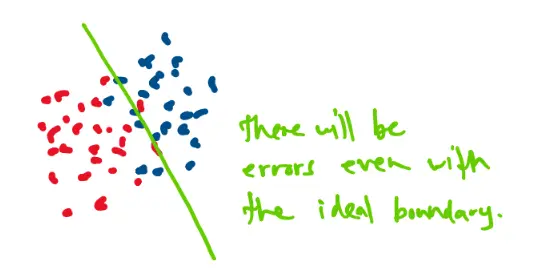

The classification loss can be written as:

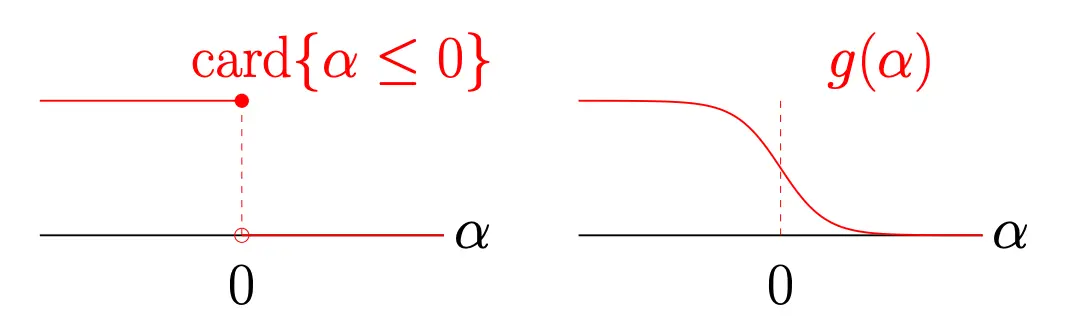

where we are using the indicator function to count how many times we get a wrong class.

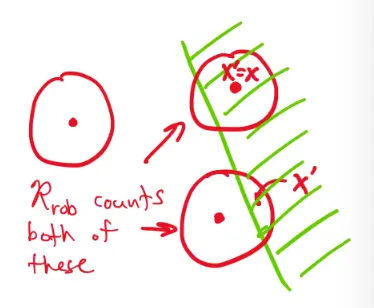

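If we want to consider how our model will perform under adversarial attack, we consider the robust loss

$$\mathcal{R}_{\text{rob}}(f) = \mathbb{E}_{(X,T)}\big[\mathbb{1}\{\exists\, X' \in \mathbb{B}(X, \epsilon) \ \text{s.t.}\ f(X')\,T \le 0\}\big]$$

where $\mathbb{B}(X, \epsilon)$ is the set of points within distance $\epsilon$ of $X$.

This has some built-in pessimism: it looks for the worst case in the neighborhood of $X$.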

Instead of counting misclassified points directly, which is not differentiable, we approximate the count using a differentiable surrogate loss function, $\phi$.

Then, the natural loss becomes:

$$\mathbb{E}_{(X,T)}\big[\phi(f(X)\,T)\big]$$
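To make the difference between counting errors and using a surrogate concrete, here is a small sketch. The logistic loss `log(1 + exp(-z))` is an assumed choice of surrogate, and the margin values (standing in for the products of model output and target) are hypothetical.

```python
import math

def zero_one_loss(margins):
    # Fraction of points with margin <= 0, i.e. misclassified points
    return sum(1 for m in margins if m <= 0) / len(margins)

def logistic_surrogate(margins):
    # Assumed surrogate: mean of log(1 + exp(-margin)), smooth and decreasing
    return sum(math.log1p(math.exp(-m)) for m in margins) / len(margins)

margins = [2.0, 0.5, -0.1, -3.0]   # hypothetical margin values
print(zero_one_loss(margins))       # 0.5 (two of the four margins are <= 0)
print(logistic_surrogate(margins))
```

Nudging a margin from 2.0 to 2.1 leaves the error count unchanged but lowers the surrogate, which is what gives gradient descent something to follow.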

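We can train a robust model with the combined loss

$$\min_f \; \mathbb{E}_{(X,T)}\Big[\phi\big(f(X)\,T\big) + \max_{X' \in \mathbb{B}(X, \epsilon)} \phi\big(f(X)\,f(X')\big)\Big]$$

- The first term ensures that each $X$ is correctly classified

- The second term adds a penalty for models that put $X$ within $\epsilon$ of the decision boundary, since then some nearby $X'$ has $f(X')$ with the opposite sign to $f(X)$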

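Implementation:

- For each gradient descent step:

  - Run several steps of gradient ascent to find the $X'$ within $\epsilon$ of $X$ that maximizes $\phi(f(X)\,f(X'))$

  - Evaluate the joint loss $\phi(f(X)\,T) + \beta\,\phi(f(X)\,f(X'))$, where $\beta$ is a regularization parameter

  - Use the gradient of the joint loss for the gradient step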
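The steps above can be sketched as follows. This is an illustration under stated assumptions, not the reference TRADES code: a linear model `f(x) = w·x + b` with hand-derived gradients, a logistic surrogate, an L-infinity ball of radius `eps`, sign-gradient ascent with step size `alpha`, and a hypothetical toy dataset. The names `find_adversarial` and `trades_train` are made up for this example.

```python
import math

def sign(v):
    return (v > 0) - (v < 0)

def f(w, b, x):
    # Assumed linear model: f(x) = w . x + b
    return sum(wj * xj for wj, xj in zip(w, x)) + b

def dphi(z):
    # Derivative of the assumed surrogate phi(z) = log(1 + exp(-z))
    if z >= 0:
        e = math.exp(-z)
        return -e / (1.0 + e)
    return -1.0 / (1.0 + math.exp(z))

def find_adversarial(w, b, x, eps, steps=5):
    # Gradient ascent on phi(f(x) * f(x')) over x', kept inside the eps-ball
    fx = f(w, b, x)
    alpha = 2.5 * eps / steps
    xp = list(x)
    for _ in range(steps):
        g = dphi(fx * f(w, b, xp)) * fx      # d phi / d x'_j = g * w_j
        xp = [pj + alpha * sign(g * wj) for pj, wj in zip(xp, w)]
        xp = [min(max(pj, xj - eps), xj + eps) for pj, xj in zip(xp, x)]
    return xp

def trades_train(X, T, eps=0.5, beta=1.0, lr=0.1, epochs=100):
    w, b = [0.0] * len(X[0]), 0.0
    for _ in range(epochs):
        for x, t in zip(X, T):
            xp = find_adversarial(w, b, x, eps)          # inner ascent
            fx, fxp = f(w, b, x), f(w, b, xp)
            g_nat = dphi(fx * t)                         # natural term
            g_rob = beta * dphi(fx * fxp)                # robustness term
            # Gradient of phi(f(x) t) + beta * phi(f(x) f(x')), with x' held fixed
            grad_w = [g_nat * t * xj + g_rob * (fxp * xj + fx * pj)
                      for xj, pj in zip(x, xp)]
            grad_b = g_nat * t + g_rob * (fxp + fx)
            w = [wj - lr * gj for wj, gj in zip(w, grad_w)]
            b -= lr * grad_b
    return w, b

# Hypothetical toy data: two well-separated classes in 2D
X = [(2.0, 2.0), (3.0, 1.0), (1.0, 3.0), (2.5, 2.5),
     (-2.0, -2.0), (-3.0, -1.0), (-1.0, -3.0), (-2.5, -2.5)]
T = [1, 1, 1, 1, -1, -1, -1, -1]
w, b = trades_train(X, T)
```

Note that the inner search never looks at the target: it only tries to make the model disagree with its own output on the clean input. The target enters through the first term alone.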