The idea of PSO is to simulate the collective behavior of social animals, such as birds flocking or fish schooling. The interest here is that these teams have no leader, and individuals have no knowledge of the global behavior of the group. They have the ability to move together based on social interaction between neighbours.

Intuition

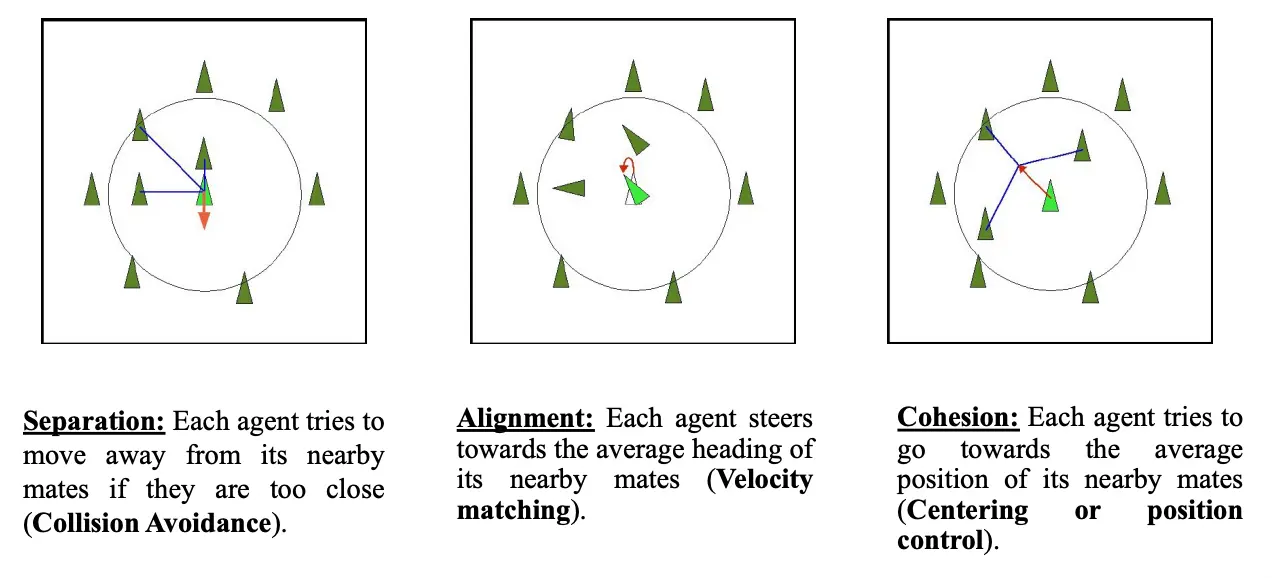

In bird flocking, there are three behaviours we need to account for:

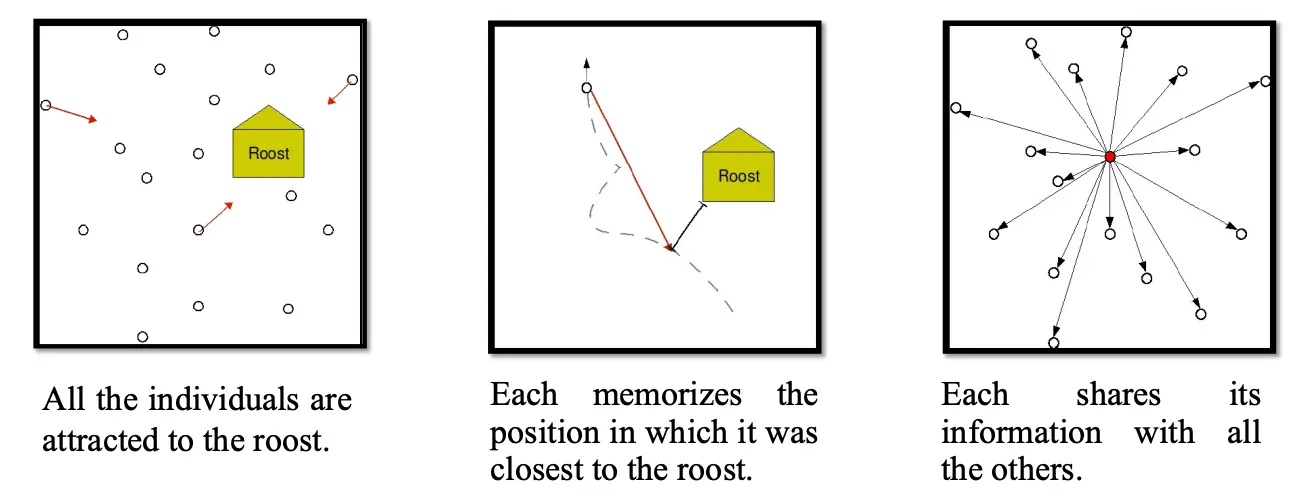

Another addition we can make is the roost (place for birds to rest) as an attractor for the birds. The roost is in the form of a memory of previous own best and neighborhood best positions (referred to as a cornfield). These two best positions serve as attractor, and by adjusting the positions of the flock proportion to the distance from the best positions, they converge to the goal.

The key to realize here is that if the distance to the roost was changed by some unknown function, the individuals land on the minimum. PSO was born from this idea.

- Particle means individual or candidate solutions

- Swarm is used because the paradigm is a simplified version of bird flocking

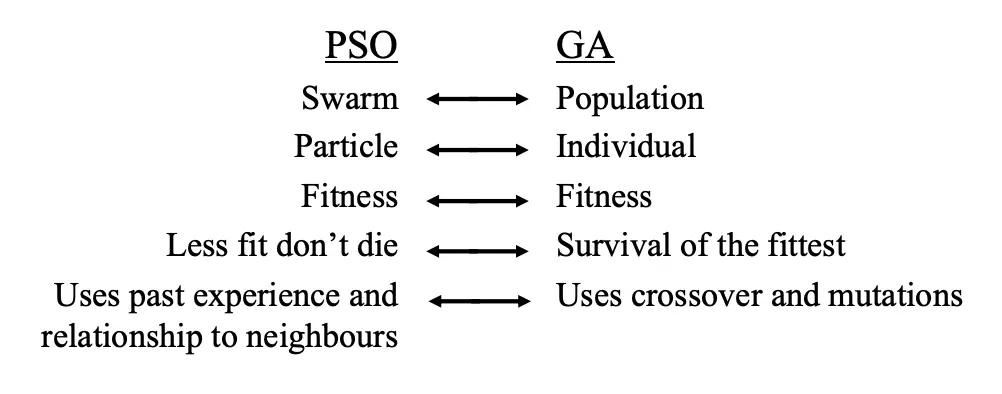

PSO vs. GA:

Motion

Each particle holds:

- Current position

- Current velocity

- Personal best: the best position it achieved so far,

- Neighborhood best: best position achieved by particles in its neighborhood,

- If the neighborhood is the whole swarm, the best achieved by the whole swarm is called the global best,

- If the neighborhood is restricted to few particles, we call it the local best

- See PSO Neighborhood Topologies

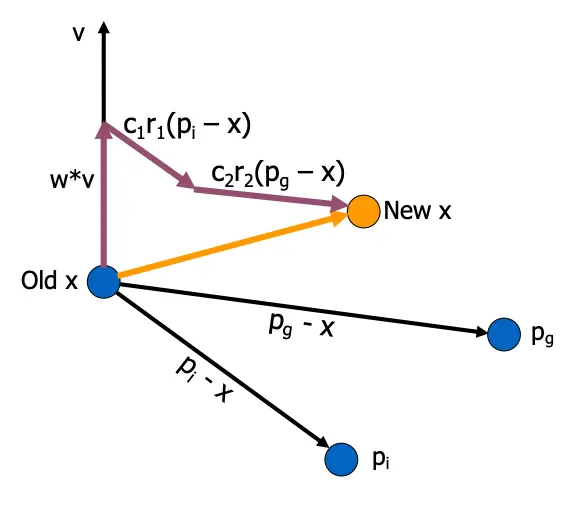

Each particle adjusts its velocity to move towards its personal best and the neighborhood best. After the velocity is updated, the particle adjusts its positions. This is governed by the following equations of motion:

- is the velocity of particle

- is the inertia weight

- are acceleration coefficients,

- are randomly generated numbers in

- is the position of the particle

- is the iteration number

- and are the particle number and dimension

The inertia term reflects the fact that a particle cannot suddenly change its direction of movement. The and factors balance the weights in which each particle trust its own experience (cognitive) and trust the swarm component (social).

Note that the random number are generated for each dimension and not for each particle. (If the function we are optimizing has 3 variables, the particle will have 3 dimensions). If the numbers are generated for each particle, we call it linear PSO, which is usually sub-optimal to PSO.

Another important factor is to set a maximum velocity . If this is too high, particles can fly past optimal solutions; if it’s too low, particles can get stuck in local optima. Thus, this is usually set according to the domain of the search space.

After the motion update, each particle updates its own personal best (assuming a minimization problem):

and the swarm updates its global best:

Algorithm

Synchronous

- Initialize the swarm

- While termination criteria is not met:

- For each particle:

- Update particle velocity

- Update particle position

- Update particle personal best

- Update Nbest,

- For each particle:

Asynchronous

- Initialize the swarm

- While termination criteria is not met:

- For each particle:

- Update particle velocity

- Update particle position

- Update particle personal best

- Update Nbest,

- For each particle:

In the asynchronous version, the neighborhood best update is moved into the particle update loop. Asynchronous tends to work better because particle use more up-to-date information.

Some potential termination criteria: Max number of iterations, max number of evaluations, acceptable solution found, no improvement over a number of iterations.

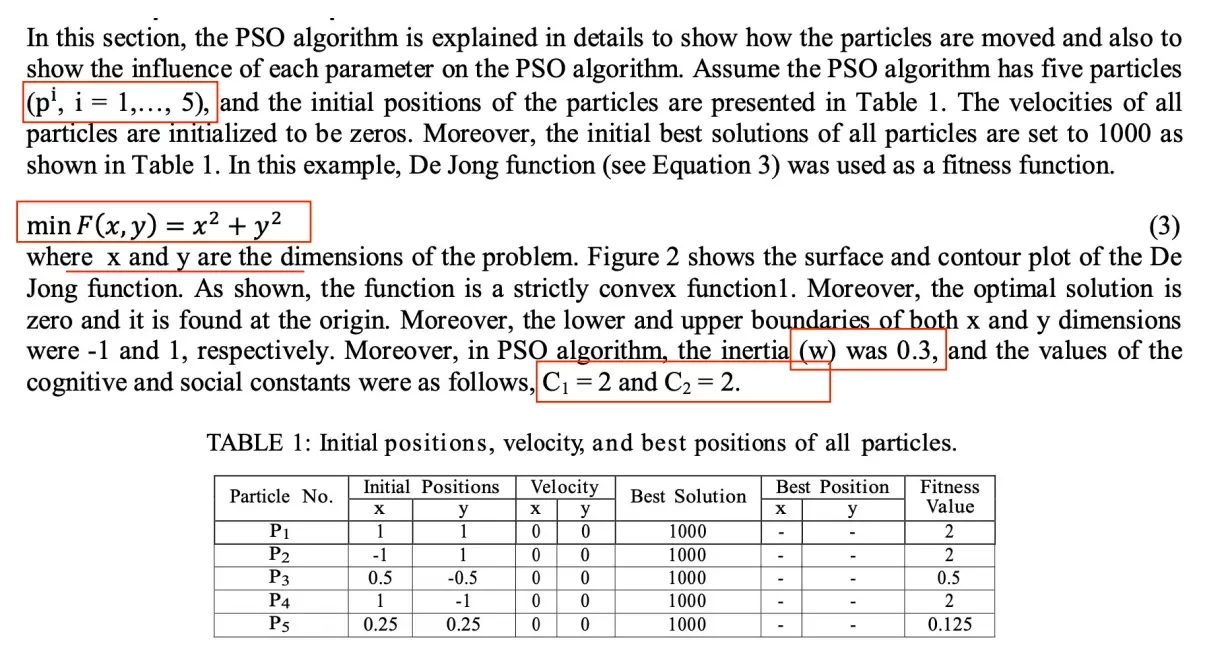

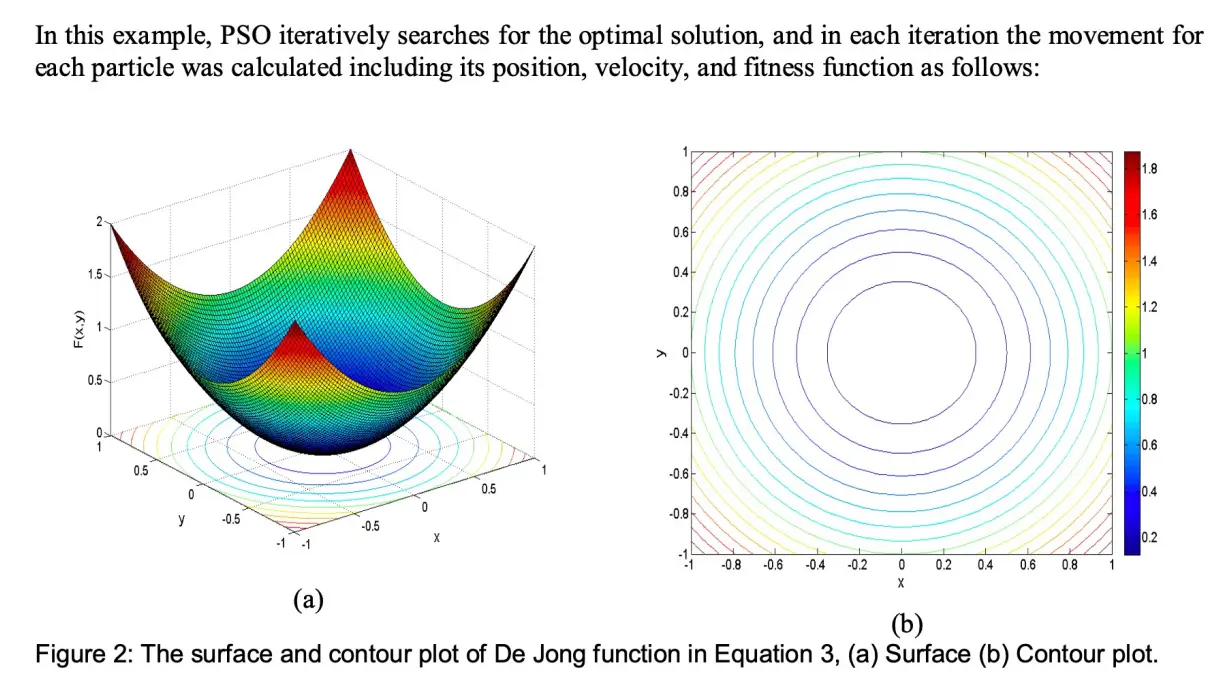

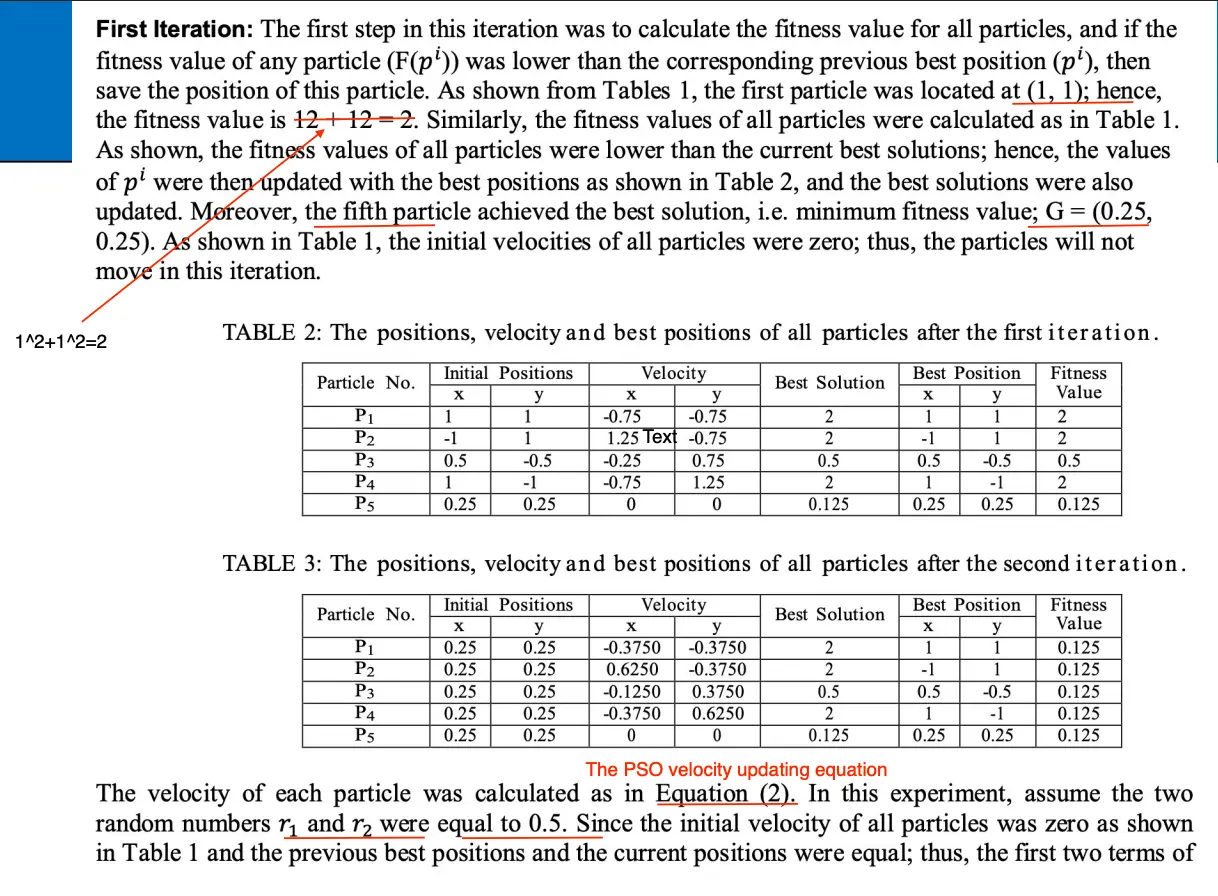

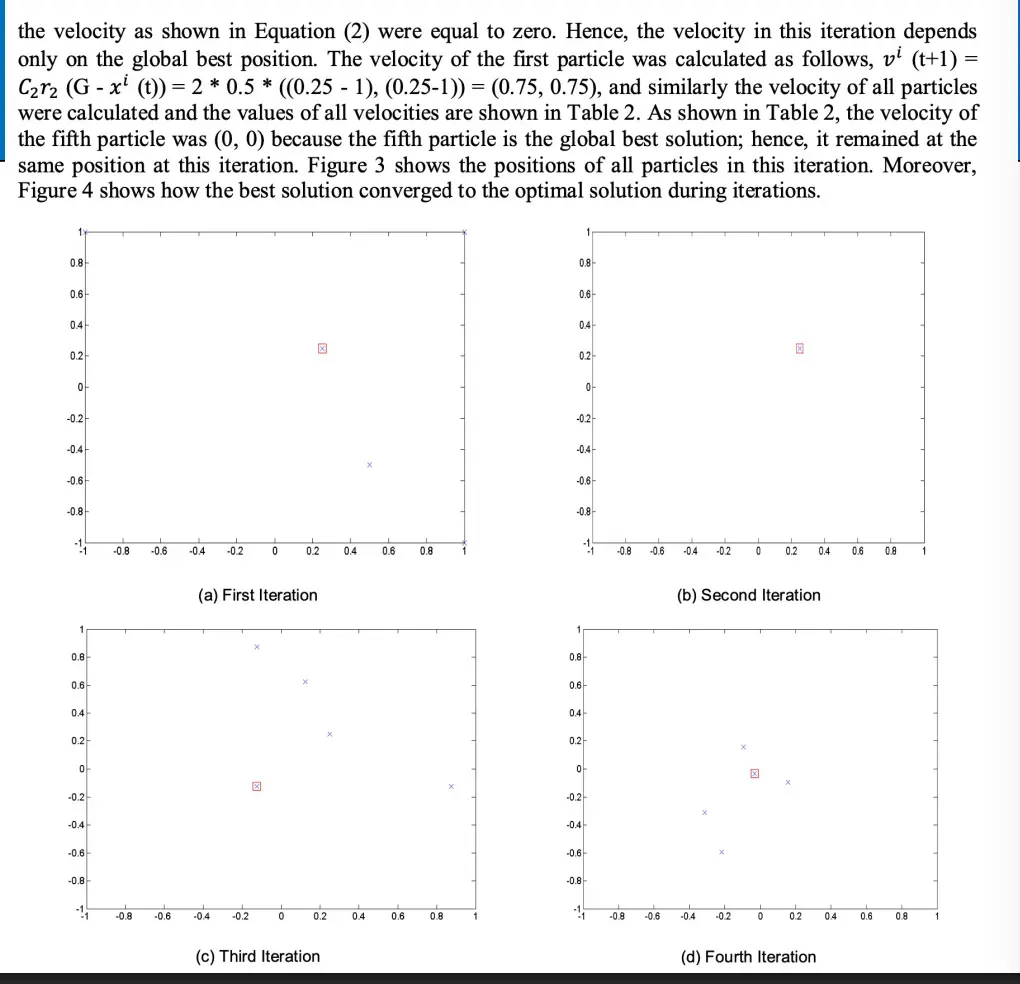

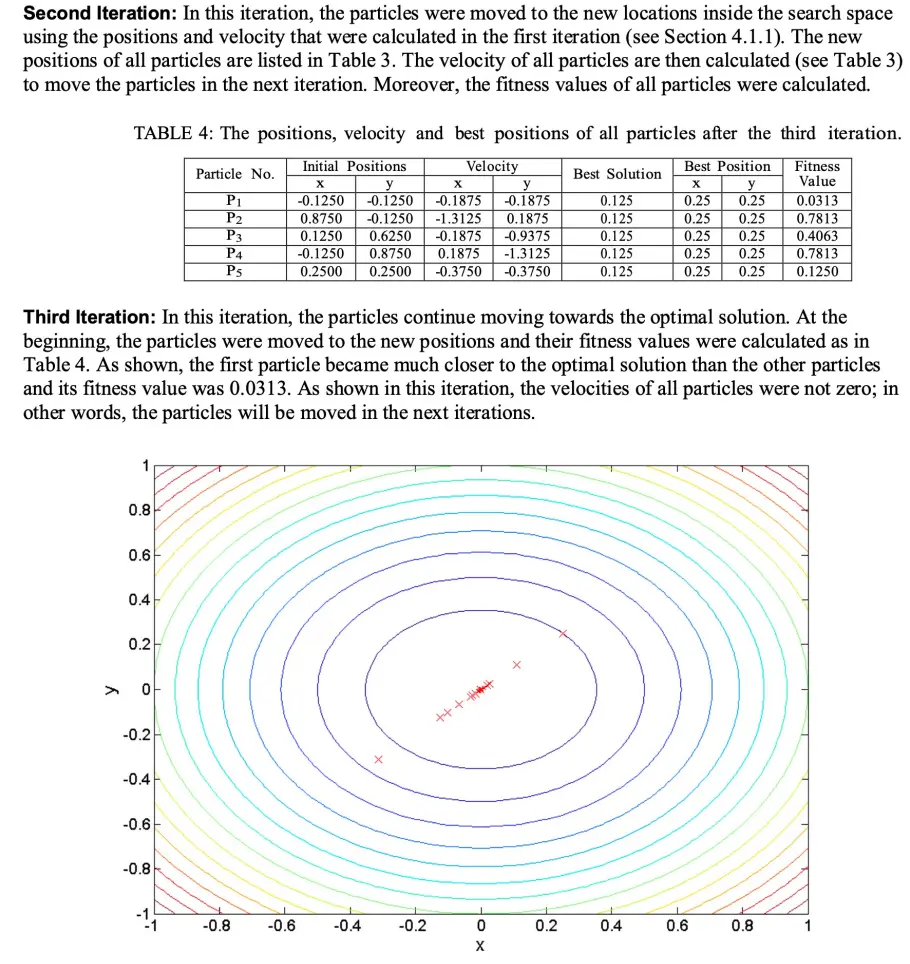

Example

Example

Behavior

PSO typically exhibits rapid early convergence and a slower refinement phase. governs the explore/exploit behavior, such that a large means more exploration and a smaller means more exploitation.

Note that we often use a velocity magnitude limit

to prevent particles from flying out of the search domain.