Control problems are attractive for GP because the controller is naturally a program. A controller maps state to action:

$$u = f(s)$$

where $s$ is the observed state and $u$ is the control action.

Thus, we can use GP to evolve threshold logic, arithmetic combinations of sensor readings, nonlinear feedback laws, and conditional policies.
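To make "the controller is a program" concrete, here is a minimal sketch of how such a controller could be represented and evaluated as an expression tree. The nested-tuple encoding, the primitive set (`add`, `sub`, `mul`, `if_gt`), and the sensor names `angle` and `rate` are all assumptions chosen for illustration, not a particular GP library's format.

```python
import operator

# Hypothetical primitive set; a real GP run would choose its own.
BINARY_OPS = {"add": operator.add, "sub": operator.sub, "mul": operator.mul}

def evaluate(node, readings):
    """Recursively evaluate an expression tree on a dict of sensor readings."""
    if isinstance(node, (int, float)):      # numeric constant leaf
        return float(node)
    if isinstance(node, str):               # sensor (variable) leaf
        return readings[node]
    op, *args = node
    if op == "if_gt":                       # conditional: if a > b then c else d
        a, b, then_branch, else_branch = args
        chosen = then_branch if evaluate(a, readings) > evaluate(b, readings) else else_branch
        return evaluate(chosen, readings)
    left, right = args
    return BINARY_OPS[op](evaluate(left, readings), evaluate(right, readings))

# Threshold logic: push one way if the angle is positive, the other way otherwise.
threshold_rule = ("if_gt", "angle", 0.0, 1.0, -1.0)

# Arithmetic combination of sensor readings (a linear feedback law).
linear_law = ("add", ("mul", 2.0, "angle"), ("mul", 0.5, "rate"))

readings = {"angle": 0.1, "rate": -0.2}     # made-up sensor values
print(evaluate(threshold_rule, readings))
print(evaluate(linear_law, readings))
```

Both controllers live in the same search space, so crossover and mutation can move between threshold rules, linear laws, and mixtures of the two.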

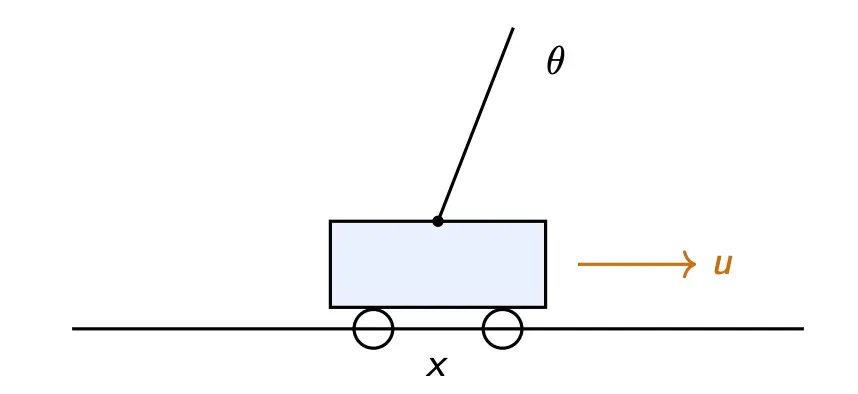

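Consider the classic cartpole problem: a pole is hinged to a cart that moves along a finite track, and the controller pushes the cart left or right to keep the pole balanced.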

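Failure occurs if $|\theta| > \theta_{\max}$ or $|x| > x_{\max}$, where $\theta$ is the pole angle from vertical and $x$ is the cart position. In other words, the pole must stay near upright and the cart must stay within the track.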

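The state is:

$$s = (x, \dot{x}, \theta, \dot{\theta})$$

that is, cart position, cart velocity, pole angle, and pole angular velocity.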

GP evolves a controller of the form:

$$u = f(x, \dot{x}, \theta, \dot{\theta})$$

The output can be either a discrete force, $u \in \{-F, +F\}$, or a continuous force in a bounded interval, $u \in [-F, +F]$.
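The two output conventions can be sketched as thin wrappers around the raw value an evolved expression returns. The bound `F_MAX = 10.0` and the function names are assumptions for illustration only.

```python
F_MAX = 10.0  # assumed force magnitude; pick whatever the plant allows

def discrete_force(raw):
    """Bang-bang output: only the sign of the evolved expression matters."""
    return F_MAX if raw >= 0.0 else -F_MAX

def continuous_force(raw):
    """Continuous output: clip the evolved expression into [-F_MAX, F_MAX]."""
    return max(-F_MAX, min(F_MAX, raw))

print(discrete_force(0.3), continuous_force(0.3))
print(discrete_force(-25.0), continuous_force(-25.0))
```

The discrete form makes the search easier in one respect (scale of the expression is irrelevant), while the continuous form lets evolution discover graded responses.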

We can then define a survival-based fitness, the number of time steps $T$ the controller survives before failure:

$$J_{\text{survival}} = T$$

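or a reward-based fitness, for example:

$$J_{\text{reward}} = \sum_{t=1}^{T} \left(1 - \alpha\,\theta_t^2 - \beta\,x_t^2\right)$$

where $T$ is the number of steps survived, each surviving step earns a reward of 1, and the weights $\alpha, \beta \ge 0$ penalize large angles and displacements. This encourages not only survival but also tight regulation: the pole near upright and the cart near the center of the track.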
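The two fitness measures can be computed from a single simulated episode. The sketch below uses the standard textbook cartpole equations with Euler integration; the physical constants, the failure limits (12 degrees, 2.4 m), the quadratic penalty weights `alpha` and `beta`, the start state, and the two hand-written controllers are all assumptions standing in for whatever the actual setup and evolved programs would be.

```python
import math

# Assumed constants (common benchmark values, not taken from the text).
GRAVITY, M_CART, M_POLE, HALF_LEN = 9.8, 1.0, 0.1, 0.5
DT, F_MAX = 0.02, 10.0
THETA_MAX = math.radians(12.0)  # assumed failure angle
X_MAX = 2.4                     # assumed track half-length

def step(state, force):
    """Advance the cart-pole by one Euler step under the given force."""
    x, x_dot, theta, theta_dot = state
    total = M_CART + M_POLE
    sin_t, cos_t = math.sin(theta), math.cos(theta)
    temp = (force + M_POLE * HALF_LEN * theta_dot**2 * sin_t) / total
    theta_acc = (GRAVITY * sin_t - cos_t * temp) / (
        HALF_LEN * (4.0 / 3.0 - M_POLE * cos_t**2 / total))
    x_acc = temp - M_POLE * HALF_LEN * theta_acc * cos_t / total
    return (x + DT * x_dot, x_dot + DT * x_acc,
            theta + DT * theta_dot, theta_dot + DT * theta_acc)

def rollout(controller, max_steps=500, alpha=1.0, beta=0.1):
    """Return (steps survived, shaped reward) for one episode."""
    state = (0.0, 0.0, 0.05, 0.0)  # assumed start: slight tilt, at rest
    steps, reward = 0, 0.0
    for _ in range(max_steps):
        force = max(-F_MAX, min(F_MAX, controller(*state)))
        state = step(state, force)
        x, theta = state[0], state[2]
        if abs(theta) > THETA_MAX or abs(x) > X_MAX:
            break                   # failure ends the episode
        steps += 1                  # survival-based fitness
        reward += 1.0 - alpha * theta**2 - beta * x**2  # reward-based fitness
    return steps, reward

# Two stand-ins for evolved programs (hypothetical, hand-written).
def do_nothing(x, x_dot, theta, theta_dot):
    return 0.0

def pd_controller(x, x_dot, theta, theta_dot):
    return 100.0 * theta + 20.0 * theta_dot

for name, ctrl in [("do_nothing", do_nothing), ("pd_controller", pd_controller)]:
    print(name, rollout(ctrl))
```

In a GP loop, `rollout` would be called once (or averaged over several start states) per individual, and either returned value could serve as the fitness.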

Control is a hard problem!