A transformer is a feedforward network with an additional mechanism, attention, that lets it focus on the parts of the input that harbour latent relationships.

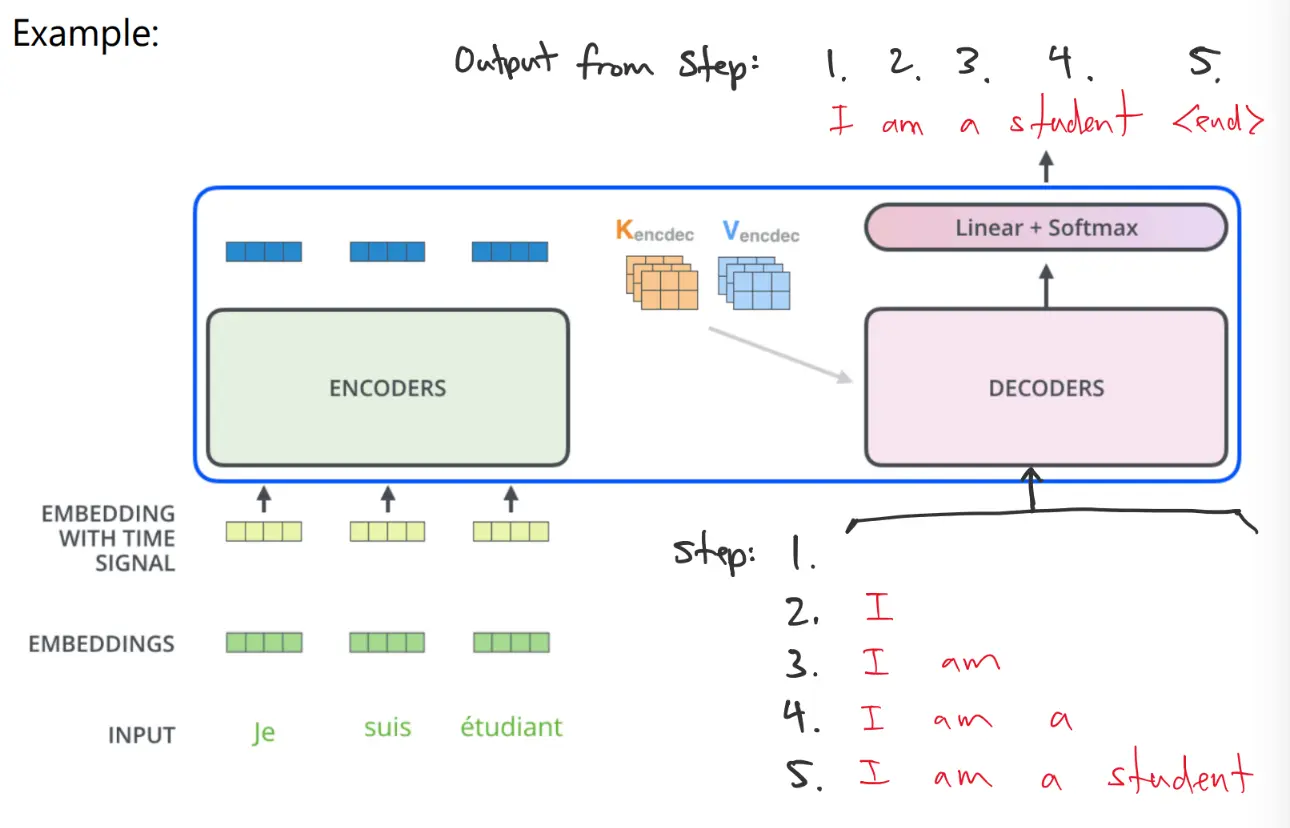

It is often used for sequence-to-sequence transformation, like language translation or generation. In general, it can be used to encode sequences, such as proteins.

Sequence Embedding

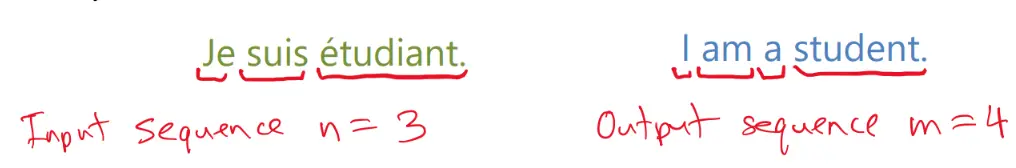

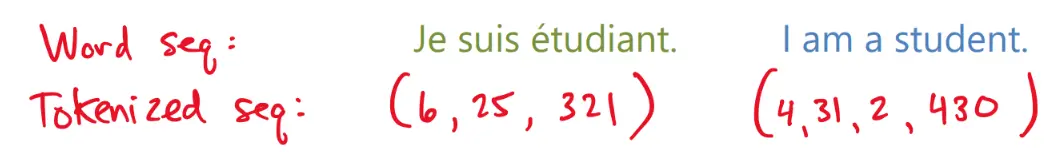

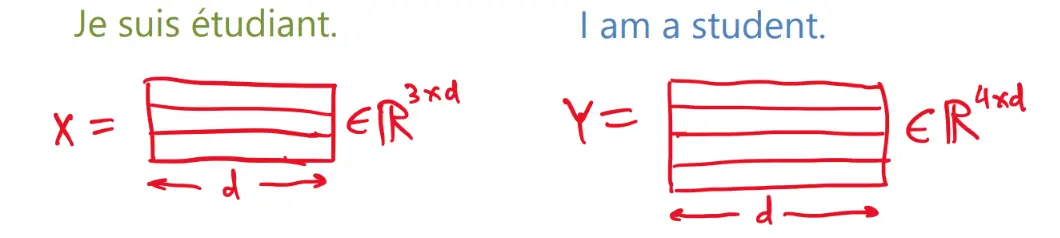

We start with a sequence of inputs, and a corresponding sequence of outputs, both of which we break up into individual elements, like words.

Each language has a vocabulary, in which each word is assigned a unique number. Mapping words to these numbers is called tokenization.

Each word sequence is converted to a token sequence:
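As a minimal sketch of tokenization, assuming lowercased, whitespace-separated words and a toy vocabulary built from the corpus itself (real tokenizers are usually more elaborate). The names `build_vocab` and `tokenize` are illustrative, not from any library:

```python
def build_vocab(sentences):
    """Assign each distinct word a unique integer, in order of first appearance."""
    vocab = {}
    for sentence in sentences:
        for word in sentence.lower().split():
            if word not in vocab:
                vocab[word] = len(vocab)
    return vocab


def tokenize(sentence, vocab):
    """Convert a word sequence into its token (integer) sequence."""
    return [vocab[word] for word in sentence.lower().split()]


corpus = ["the cat sat", "the dog sat down"]
vocab = build_vocab(corpus)
tokens = tokenize("the dog sat", vocab)
print(tokens)  # [0, 3, 2]
```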

Finally, each token is converted to a vector using an embedding. Suppose the embedding vectors have dimension $d$.
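An embedding can be pictured as a lookup table with one row per token. The sketch below assumes randomly initialized rows (in practice they are learned) and calls the embedding dimension `d`. The names `make_embedding` and `embed` are hypothetical:

```python
import random


def make_embedding(vocab_size, d, seed=0):
    """Create a vocab_size x d table of random embedding vectors."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(vocab_size)]


def embed(tokens, embedding):
    """Look up the embedding vector for each token in the sequence."""
    return [embedding[t] for t in tokens]


embedding = make_embedding(vocab_size=5, d=4)
vectors = embed([0, 3, 2], embedding)  # three tokens -> three vectors of dimension 4
```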

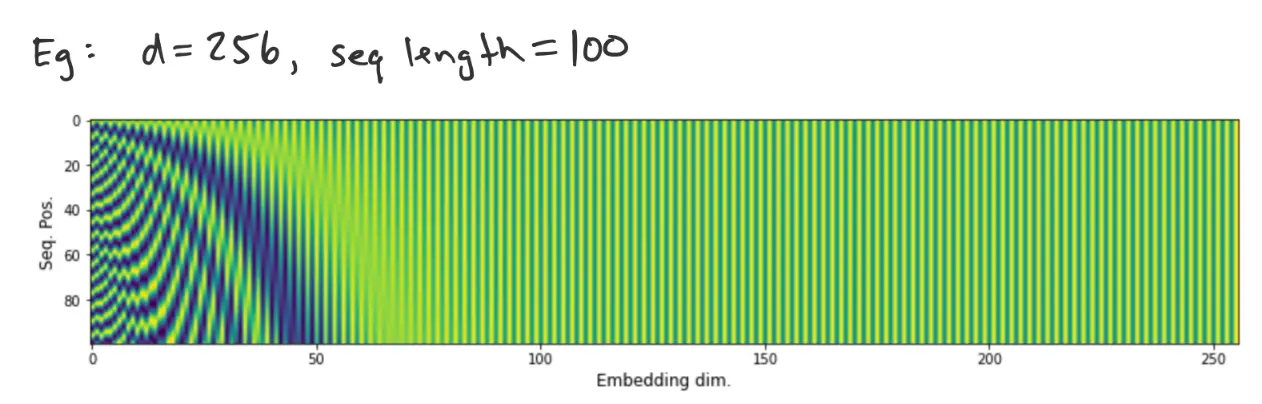

Positional Encoding

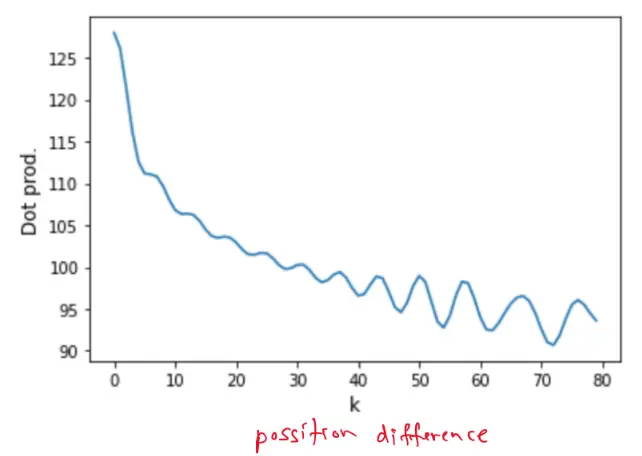

The order of the words/tokens/vectors in the sequence matters, so we need a way to indicate their relative order.

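Given embedding dimension $d$ and vector index (position in the sequence) $i$, the positional encoding $PE(i)$ is a vector of dimension $d$ with:

- Even index elements: $PE(i)_{2j} = \sin\left(\dfrac{i}{10000^{2j/d}}\right)$

- Odd index elements: $PE(i)_{2j+1} = \cos\left(\dfrac{i}{10000^{2j/d}}\right)$

Here 10000 is the base used in the original Transformer paper.

Then:

$$x_i \leftarrow x_i + PE(i)$$

Note that:

$$PE(i) \cdot PE(i+k) = \sum_j \cos\left(\frac{k}{10000^{2j/d}}\right)$$

is a constant function of $i$.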
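The encoding can be sketched in a few lines. This assumes the standard sinusoidal form with base 10000 (sine at even indices, cosine at odd indices) and that the encoding is added to the embedding vectors. The function names are illustrative:

```python
import math


def positional_encoding(position, d, base=10000.0):
    """Sinusoidal encoding for one position: sin at even indices, cos at odd ones."""
    pe = [0.0] * d
    for j in range(0, d, 2):  # j is the even index of each (sin, cos) pair
        angle = position / base ** (j / d)
        pe[j] = math.sin(angle)
        if j + 1 < d:
            pe[j + 1] = math.cos(angle)
    return pe


def add_positions(vectors):
    """Add the encoding of each position to the embedding vector at that position."""
    d = len(vectors[0])
    return [
        [x + p for x, p in zip(vec, positional_encoding(i, d))]
        for i, vec in enumerate(vectors)
    ]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


d = 8
# Encodings that are 3 positions apart have the same dot product anywhere in the sequence.
near_start = dot(positional_encoding(1, d), positional_encoding(4, d))
far_along = dot(positional_encoding(50, d), positional_encoding(53, d))
print(math.isclose(near_start, far_along))  # True
```

The final check illustrates why this encoding conveys relative order: the dot product between two encodings depends only on how far apart the positions are.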

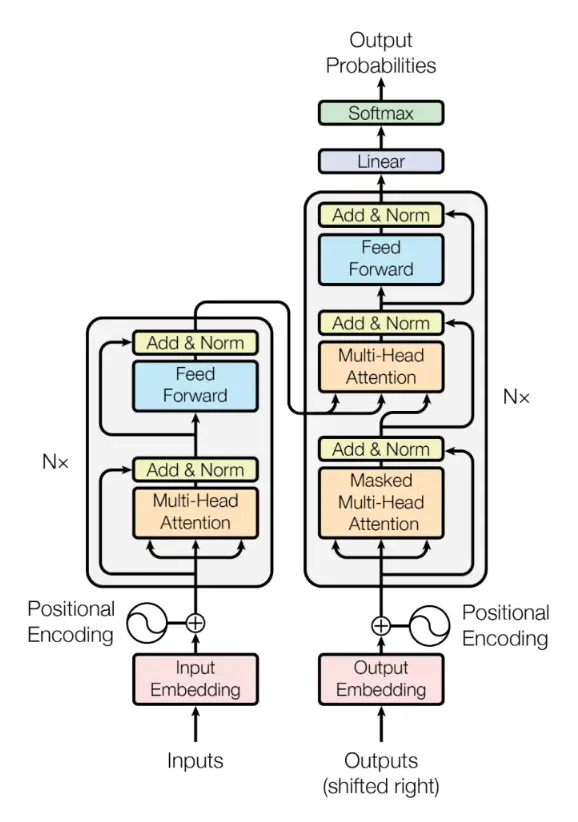

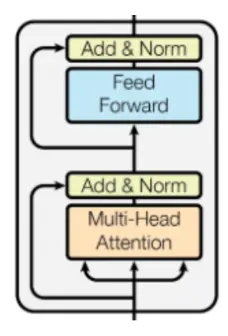

Encoder

The encoder module is a stack of layers: attention, add & norm, and a feed-forward layer.

Attention

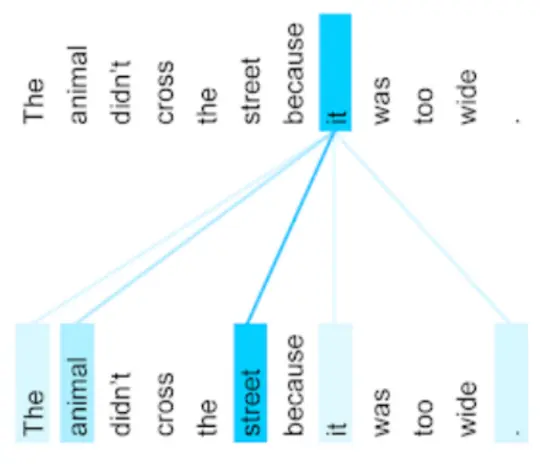

Attention calculates the similarity between different elements of the sequence and uses these similarities to compute weights for the elements of the sequence. The attention mechanism shortens the paths between related inputs in the sequence. For an attention layer, every output vector contains input from every vector in the sequence.

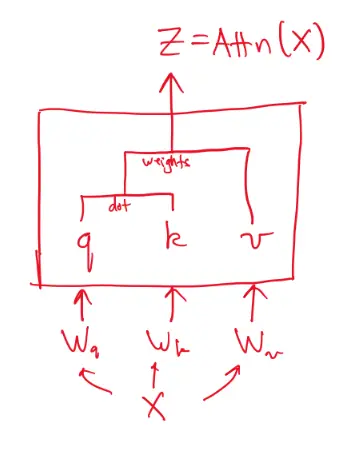

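For each input vector $x_i$, we create three vectors: a query $q_i$, a key $k_i$, and a value $v_i$.

Compare each query vector to each key vector (dot product):

$$s_{ij} = q_i \cdot k_j$$

$s_{ij}$ is vector $j$'s score for vector $i$.

These scores are then scaled and softmaxed, so that the scores for each query sum to 1, to get the attention scores:

$$a_{ij} = \frac{\exp\left(s_{ij}/\sqrt{d_k}\right)}{\sum_{j'} \exp\left(s_{ij'}/\sqrt{d_k}\right)}$$

where $d_k$ is the dimension of the key vectors (the $1/\sqrt{d_k}$ scaling is the one used in the original Transformer paper).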

The output of this layer is a sequence of vectors. Each output vector is a weighted average of the value vectors, weighted by the corresponding attention scores.
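The steps above can be sketched with plain Python lists. This assumes the queries, keys, and values are produced by three weight matrices (called `W_q`, `W_k`, `W_v` here) and that scores are scaled by the square root of the key dimension before the softmax. All names are illustrative:

```python
import math


def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p), both stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def softmax(scores):
    """Turn a list of scores into positive weights that sum to 1."""
    m = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(X, W_q, W_k, W_v):
    """Single-head self-attention over the rows of X."""
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)
    d_k = len(K[0])
    K_T = [list(col) for col in zip(*K)]
    S = matmul(Q, K_T)  # S[i][j] = q_i . k_j
    A = [softmax([s / math.sqrt(d_k) for s in row]) for row in S]
    return matmul(A, V), A  # each output row is a weighted average of the rows of V


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # toy weights so that Q = K = V = X
output, weights = attention(X, identity, identity, identity)
```

With these toy weights, the first vector attends equally to itself and to the third vector, and less to the second, because its dot product with the second vector is zero.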

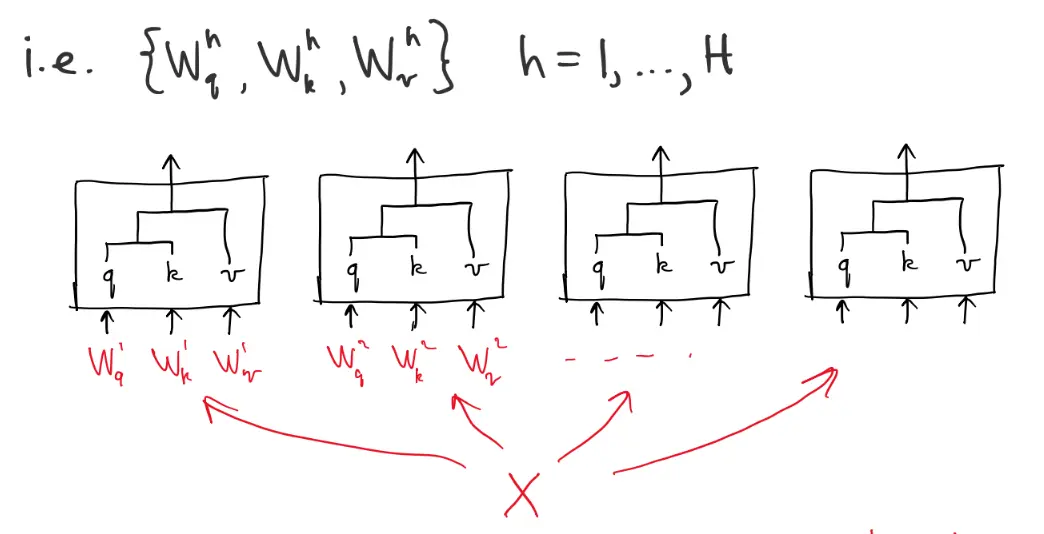

Multi-Head Attention

It is common to have multiple attention “heads” in a layer, by simply duplicating the whole attention structure, and running it in parallel.

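All of these weight matrices can be lumped into one giant weight matrix $W$, and then we just do a single matrix multiplication $XW$, where the rows of $X$ are the input vectors, and split the result back into the per-head queries, keys, and values.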
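A rough sketch of the idea, assuming each head's weight matrices are placed side by side as column blocks of one larger matrix, so that a single multiplication produces every head's projection at once. The output projection used in the original Transformer is omitted, and all names are hypothetical:

```python
import math


def matmul(A, B):
    """Multiply matrix A (n x m) by matrix B (m x p), both stored as lists of rows."""
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)] for row in A]


def softmax(scores):
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def hconcat(mats):
    """Place matrices with the same number of rows side by side."""
    return [sum(rows, []) for rows in zip(*mats)]


def split_heads(M, n_heads):
    """Cut the columns of M into n_heads equal-width blocks, one per head."""
    width = len(M[0]) // n_heads
    return [[row[h * width:(h + 1) * width] for row in M] for h in range(n_heads)]


def multi_head_attention(X, W_q, W_k, W_v, n_heads):
    """Project once with the lumped matrices, then run attention per head."""
    Q, K, V = matmul(X, W_q), matmul(X, W_k), matmul(X, W_v)
    head_outputs = []
    heads = zip(split_heads(Q, n_heads), split_heads(K, n_heads), split_heads(V, n_heads))
    for Qh, Kh, Vh in heads:
        d_k = len(Kh[0])
        Kh_T = [list(col) for col in zip(*Kh)]
        S = matmul(Qh, Kh_T)
        A = [softmax([s / math.sqrt(d_k) for s in row]) for row in S]
        head_outputs.append(matmul(A, Vh))
    return hconcat(head_outputs)  # concatenate the heads' outputs


X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
head_1 = [[1.0], [0.0]]  # each toy head projects d = 2 down to d_k = 1
head_2 = [[0.0], [1.0]]
W = hconcat([head_1, head_2])  # the lumped weight matrix
out = multi_head_attention(X, W, W, W, n_heads=2)
```

Splitting `matmul(X, W)` by columns gives the same result as multiplying `X` by each head's matrix separately, which is why the lumped form is equivalent.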

Add & Norm

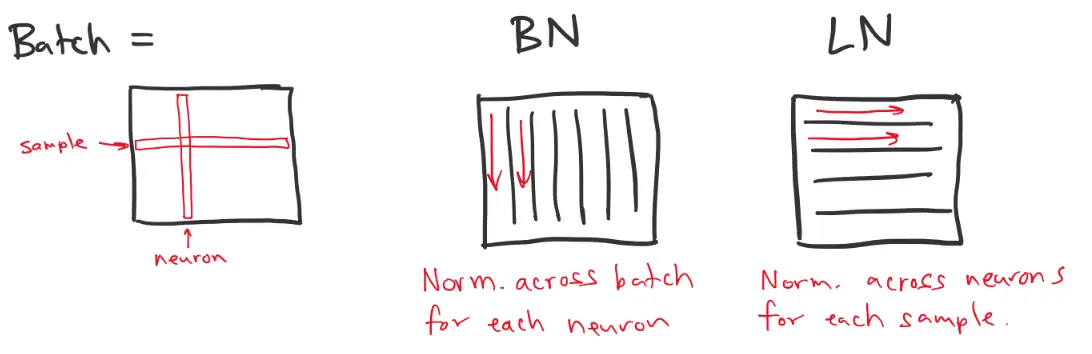

First, we perform layer normalization:

$$\hat{x} = \frac{x - \mu}{\sigma}$$

This is similar to batch normalization (BN), except that in BN you normalize the input to (or output of) a single neuron over a batch. In layer normalization (LN), you normalize the inputs to a layer for each sample. This is essentially the transpose of BN on a batch.

Then, like in batchnorm, we apply a learned bias and gain:

$$y = \gamma \hat{x} + \beta$$

where $\mu$ and $\sigma$ are the mean and standard deviation of the layer ($x$), $\gamma$ is the gain, and $\beta$ is the bias.

Finally, there is a residual connection, which adds a block's input $x$ back to the block's output $f(x)$:

$$x + f(x)$$
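A small sketch of both pieces for a single vector. It assumes a small constant `eps` for numerical safety. It also assumes the two are combined as "add, then normalize", which follows the block's name and the original Transformer but may not match the order intended here. The input values are made up:

```python
import math


def layer_norm(x, gain, bias, eps=1e-5):
    """Normalize one sample's vector to zero mean and unit variance, then scale and shift."""
    mu = sum(x) / len(x)
    variance = sum((v - mu) ** 2 for v in x) / len(x)
    sigma = math.sqrt(variance + eps)  # eps avoids division by zero
    return [g * (v - mu) / sigma + b for v, g, b in zip(x, gain, bias)]


def residual(x, block_output):
    """Residual connection: add the block's input back to its output."""
    return [a + b for a, b in zip(x, block_output)]


x = [1.0, 2.0, 3.0, 4.0]                  # input to the attention block
attention_output = [0.5, -0.5, 1.0, 0.0]  # made-up output of the attention block
gain, bias = [1.0] * 4, [0.0] * 4         # learned in practice; identity values here
y = layer_norm(residual(x, attention_output), gain, bias)
```

Note that the statistics are computed across the elements of one vector, not across a batch, which is the difference from batch normalization described above.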

Feed-Forward Layer

The FF layer simply applies weights and biases, is combined with a residual connection, and is followed by another layer normalization.
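As a hedged sketch, the block below assumes the common two-layer form with a ReLU in between, which is not spelled out here, and applies it to a single vector. The learned gain and bias of the layer normalization are left out for brevity, and the weights are toy values:

```python
import math


def linear(x, W, b):
    """Apply weights and biases to one vector: y = x W + b, with W stored as rows."""
    return [sum(xi * w for xi, w in zip(x, col)) + bj for col, bj in zip(zip(*W), b)]


def relu(x):
    return [max(0.0, v) for v in x]


def layer_norm(x, eps=1e-5):
    """Layer normalization without the learned gain and bias."""
    mu = sum(x) / len(x)
    variance = sum((v - mu) ** 2 for v in x) / len(x)
    return [(v - mu) / math.sqrt(variance + eps) for v in x]


def feed_forward_block(x, W1, b1, W2, b2):
    """Feed-forward layer, residual connection, then layer normalization."""
    hidden = relu(linear(x, W1, b1))
    out = linear(hidden, W2, b2)
    added = [a + b for a, b in zip(x, out)]  # residual connection
    return layer_norm(added)


x = [1.0, -2.0]
W1 = [[1.0, 0.0, -1.0], [0.0, 1.0, 1.0]]    # 2 -> 3 hidden units
b1 = [0.0, 0.0, 0.0]
W2 = [[1.0, 2.0], [0.0, 0.0], [0.0, 0.0]]   # 3 -> 2, back to the input dimension
b2 = [0.5, 0.5]
y = feed_forward_block(x, W1, b1, W2, b2)
```

The same weights are applied independently to every vector in the sequence, so the output sequence has the same length and dimension as the input.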

This encoder module is repeated a number of times in series. The output of the encoder is a sequence of vectors, the same length as the input sequence.

Decoder

The decoder is made up of many of the same parts as the encoder. However, the second block of MHA receives its keys and values from the encoder, while its queries come from the decoder itself. In addition, the “outputs” are revealed to the decoder one word (vector) per step. This is done by masking out the future words.
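Masking can be sketched as follows, assuming the usual approach of setting the scores of future positions to negative infinity before the softmax so that they receive zero weight. The function names are illustrative:

```python
import math


def causal_mask(n):
    """mask[i][j] is True when position i is allowed to look at position j (j <= i)."""
    return [[j <= i for j in range(n)] for i in range(n)]


def masked_softmax(scores, allowed):
    """Softmax over the allowed positions only; masked positions get weight 0."""
    masked = [s if keep else -math.inf for s, keep in zip(scores, allowed)]
    m = max(masked)  # finite, because each position can always see itself
    exps = [math.exp(s - m) for s in masked]
    total = sum(exps)
    return [e / total for e in exps]


def masked_attention_weights(S):
    """Apply the causal mask row by row to a square matrix of scores."""
    mask = causal_mask(len(S))
    return [masked_softmax(row, allowed) for row, allowed in zip(S, mask)]


scores = [[0.0, 0.0, 0.0] for _ in range(3)]  # toy scores: every pair equally similar
weights = masked_attention_weights(scores)
print(weights[1])  # [0.5, 0.5, 0.0]
```

Each row only spreads its weight over the current and earlier positions, so the first word sees only itself and the last word sees the whole sequence.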