So far, we’ve built neural networks by prescribing connection weights. We decide what computation we want, and then choose decoders to implement the required transformation.

Most brains don’t have an oracle to solve for connection weights. It turns out all we need is an error signal.

How can that error be used to update the connection weights?

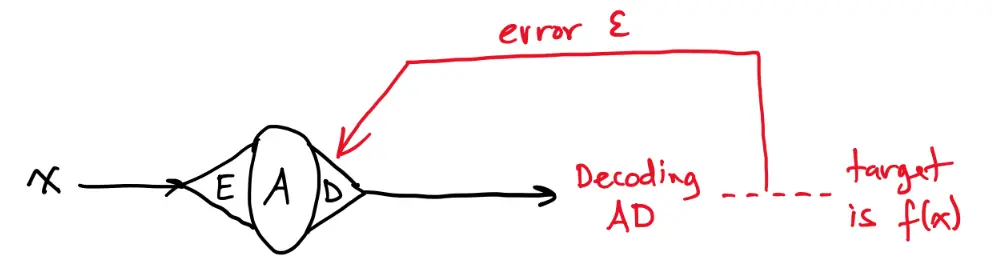

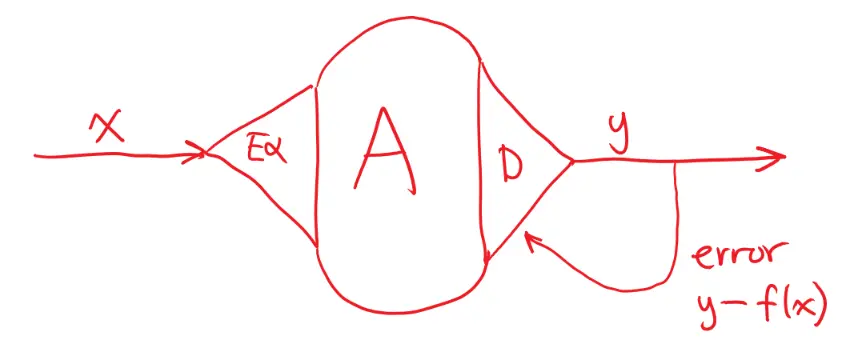

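Let’s start with a simple model, in which the estimate $\hat{x}$ is decoded from the neural activities $a_i$ with decoders $d_i$:

$$\hat{x} = \sum_i d_i a_i$$

Consider the decoding error:

$$E = \frac{1}{2}\left(x - \hat{x}\right)^2$$

To minimize this error iteratively, we can use gradient descent. Differentiating with respect to each decoder gives:

$$\frac{\partial E}{\partial d_i} = -\left(x - \hat{x}\right) a_i$$

This is the gradient vector that points “uphill” for the error function, or in the direction of greatest rate of increase. To move to a position ($d_i$) with lower error, we move in the direction opposite the gradient vector.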

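More generally, for a vector-valued error signal $\mathbf{x} - \hat{\mathbf{x}}$:

$$\Delta \mathbf{d}_i = \kappa \, a_i \left(\mathbf{x} - \hat{\mathbf{x}}\right)$$

- $\kappa$ is the learning rate

- $a_i$ is the pre-synaptic neural activity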
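
As a rough illustration of an error-driven update of this kind, the sketch below learns scalar decoders with plain NumPy. It is not taken from the notes: the rectified-linear tuning curves, the parameter ranges, the value of `kappa`, and the helper names `activity`, `train`, and `rmse` are all assumptions made for the example. Each step decodes an estimate, computes the error, and nudges every decoder in proportion to the error and that neuron's activity.

```python
import numpy as np

rng = np.random.default_rng(0)

n_neurons = 50
# Hypothetical rectified-linear tuning curves standing in for the activities a_i(x).
encoders = rng.choice([-1.0, 1.0], size=n_neurons)
gains = rng.uniform(0.5, 2.0, size=n_neurons)
biases = rng.uniform(-1.0, 1.0, size=n_neurons)


def activity(x):
    """Return the activity of every neuron for a scalar input x."""
    return np.maximum(0.0, gains * encoders * x + biases)


def train(n_steps=5000, kappa=1e-3):
    """Learn decoders online with an error-times-activity update."""
    d = np.zeros(n_neurons)           # start with no decoding at all
    for _ in range(n_steps):
        x = rng.uniform(-1.0, 1.0)    # sample a value to represent
        a = activity(x)               # pre-synaptic activity
        x_hat = a @ d                 # decoded estimate
        d += kappa * (x - x_hat) * a  # step opposite the error gradient
    return d


def rmse(d, xs=None):
    """Root-mean-square decoding error over a grid of test inputs."""
    if xs is None:
        xs = np.linspace(-1.0, 1.0, 101)
    A = np.array([activity(x) for x in xs])
    return float(np.sqrt(np.mean((xs - A @ d) ** 2)))


if __name__ == "__main__":
    print("error before learning:", rmse(np.zeros(n_neurons)))
    print("error after learning: ", rmse(train()))
```

The learning rate is kept small here so that each step changes the estimate by only a fraction of the current error; a value that is too large relative to the total squared activity would make the updates overshoot.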